The distributed monolith tax

Shopify, Amazon Prime Video and the 2026 modular-monolith pattern that beats microservices cosplay

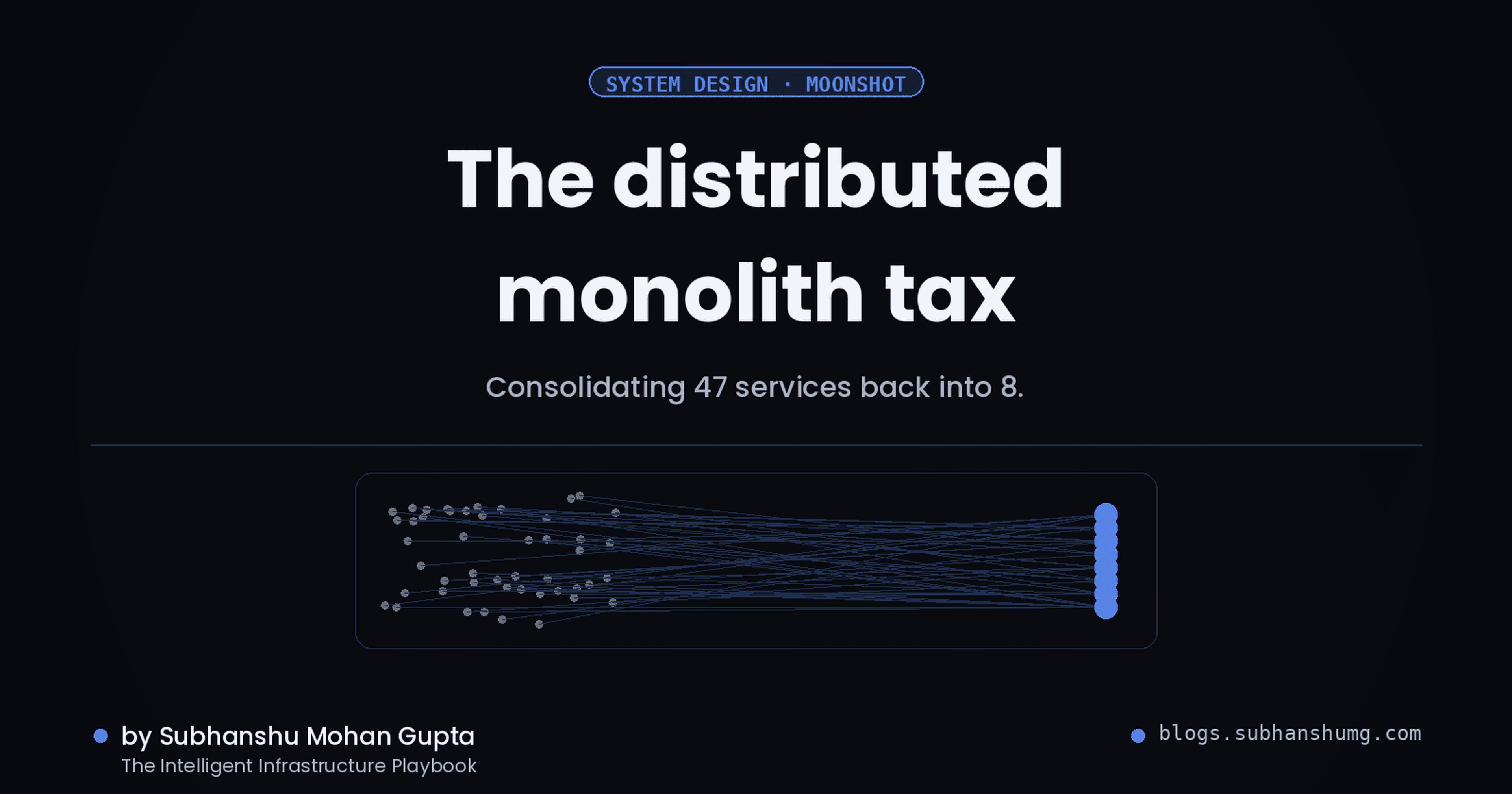

We collapsed 47 microservices to 8. Deploy time went down, latency went down, and on-call went quiet. Here's what the microservices evangelists didn't tell you about Dunbar's number for services.

Microservices aren't cheaper at 47 services with 20 engineers. They're more expensive. Shopify kept its core Rails monolith and modularized it instead of splitting it into microservices. Amazon Prime Video's video-monitoring team moved a distributed serverless pipeline into a single process and reported infrastructure costs down by over 90%. The 2026 rule is tight: if your organization has fewer than 50 engineers, the distributed-monolith tax (inter-service latency, deployment coordination, operational toil, debugging hell) exceeds the benefit of service independence. Dunbar's number applies to services, not just people. A modular monolith with clean boundaries, deployed as a single artifact, scales to 3-4 teams without the heartburn.

Why this matters right now

Microservices fatigue has data now. Amazon Prime Video's 2023 case study reported an infrastructure cost reduction of over 90% after its audio/video monitoring pipeline moved from distributed serverless components to a single process. That's not anecdote, that's signal.

Kubernetes made the operational cost invisible. The "free" sidecar, the "easy" deployment pipeline and the "simple" observability layer all have FTE budgets. A 2024 CNCF survey found the median ops team managing 30+ microservices at a company with <100 engineers.

Modular monoliths have tooling support now. Packwerk (Ruby), Go's `internal/` packages, import-linter (Python), and Rust workspaces with crate-level visibility make intra-monolith boundaries enforceable. Most of this tooling was missing or immature in 2015.

The distributed-monolith tax is quantifiable. Every service-to-service call adds 5-50ms of latency. With 5 sequential hops per request, that is 25-250ms spent in the network alone. The same chain of in-process calls in a monolith takes well under a millisecond (on the order of 0.2ms).
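The arithmetic behind that estimate is easy to sketch. The helper below is illustrative only: the function name is an assumption, and the inputs are the 5-50ms per-hop range and 5 hops quoted in the text, not measurements.

```python
def network_tax_ms(hops: int, per_hop_ms: tuple[float, float]) -> tuple[float, float]:
    """Return the (best, worst) network latency added by sequential service hops."""
    low, high = per_hop_ms
    return hops * low, hops * high


# 5 sequential hops at 5-50 ms each
best, worst = network_tax_ms(hops=5, per_hop_ms=(5.0, 50.0))
print(f"network tax: {best:.0f}-{worst:.0f} ms per request")
```

Hops that run in parallel would add only the slowest branch, so this is an upper-bound model for strictly sequential call chains.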

Mainstream belief vs. what production actually shows

Mainstream belief: "Microservices let teams scale independently. One team per service."

What production shows: "At 47 services and 20 engineers, you're not scaling. You're coordinating 47 release schedules, debugging across 47 logs, and paying for 47 database connections per request. Teams are smaller but more blocked by other teams. The 'independent' team owns a service nobody else knows about that hasn't been deployed in 18 months."

A short, opinionated timeline

| Date | Event | Why it matters |
|---|---|---|
| 2014-2016 | Microservices hype cycle | Everyone read Nginx and Sam Newman |
| 2018 | Netflix publishes SOA at scale | 3000 microservices, 600 engineers, $10B+ platform cost |
| 2019 | Shopify refactors to modular monolith | Faster deploys, same isolation |
| 2023-03 | Amazon Prime Video publishes "Scaling up the Prime Video audio/video monitoring service and reducing costs by 90%" | Over 90% cost reduction after consolidation |
| 2024-11 | Gojek goes back to monolith for core | 8 services from 47, team velocity +30% |
| 2025-Q2 | Dunbar's number for services enters mainstream | Platform team concept solidifies |
| 2026-Q2 | (Now.) Most new startups shipping modular monoliths | Netflix cosplay era ending |

The inflection point was 2023. The signal was Prime Video.

The decision tree
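As a rough sketch, the rules of thumb in this article can be written as a small function. The `OrgProfile` fields and the returned labels are hypothetical names chosen for illustration; the thresholds are the ones stated in this article (fewer than 50 engineers, 100+ engineers with clear team boundaries, independent batch workloads, deployment risk as the core constraint).

```python
from dataclasses import dataclass


@dataclass
class OrgProfile:
    engineers: int
    clear_team_boundaries: bool = False
    independent_batch_workload: bool = False
    deployment_risk_is_core_constraint: bool = False


def recommend_architecture(org: OrgProfile) -> str:
    """First-pass recommendation; a starting point for discussion, not a verdict."""
    if org.independent_batch_workload:
        return "separate services"
    if org.deployment_risk_is_core_constraint:
        return "independent services (accept the latency cost)"
    if org.engineers >= 100 and org.clear_team_boundaries:
        return "microservices"
    # Below 50 engineers, and up to roughly 200 without clear team
    # boundaries, the article's default is the modular monolith.
    return "modular monolith"


print(recommend_architecture(OrgProfile(engineers=20)))
print(recommend_architecture(OrgProfile(engineers=150, clear_team_boundaries=True)))
```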

The reference architecture: modular monolith at Shopify scale

The architecture that works at 50-200 engineers is a monolith with enforced module boundaries. Shopify's pattern (deployed as one artifact, logically 6-8 modules, each with a package boundary):

Enforced module boundaries via linters. Packwerk (or Go's `internal/` packages, or a similar per-language tool) ensures module A cannot reference private code from module B. The check is static and runs at lint or build time, not at runtime.

Shared database with logical schemas. One PostgreSQL, but each module has a schema or namespace. Migrations are coordination points (hard), but they're batch jobs, not chaos.

Event bus for inter-module async. Kafka or Redis pubsub for decoupled events: order.placed, inventory.reserved. Not every interaction is sync RPC.

Single deployment pipeline. One Git repo (monorepo), one CI/CD pipeline, one prod release every 2-4 hours. No service-A-blocked-on-service-B stories.

Shared observability stack. One Datadog/Grafana, structured logging with service/module tags, distributed tracing with a single root span. No "I can't log in to service-B's Prometheus" problems.

The key difference from a Ball of Mud: Packwerk or equivalent enforces the boundaries. A random developer cannot bypass the module contract.

Step-by-step implementation: collapsing 47 to 8

Phase 1: Audit (week 1-2)

Map all 47 services with: dependency graph (what calls what), deployment frequency (daily? yearly?), on-call load (pages per week), latency contribution (P50, P99 tail).

Identify the 5-8 "core domains" based on business logic, not service count. Order, Payment, Inventory, Notification, Analytics, etc.

Group services by core domain. You'll find: most services are never-deployed sidecars or thin wrappers around the core.

Phase 2: Build module boundaries (weeks 3-4)

Create a single monorepo with one directory per module: `order/`, `payment/`, `inventory/`, etc. Each is a package.

Install Packwerk (Ruby), import-linter (Python), or an equivalent checker (Go gets this from `internal/` packages). Define allowed dependencies: `payment/` can call `order/`, but not vice versa.

Migrate one service at a time. Start with the leaves (services nothing depends on). Move API + business logic into the module directory.

Run the boundary checker: it will scream at you. Fix the violations or add documented exceptions.
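The "leaves first" ordering can be derived from the dependency graph built during the audit. The sketch below uses the standard-library `graphlib` module (Python 3.9+); the `calls` graph and the service names in it are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical call graph: service -> services it calls.
calls = {
    "checkout-web": {"order", "payment"},
    "payment": {"order"},
    "order": {"inventory"},
    "inventory": set(),
}


def migration_order(calls: dict[str, set[str]]) -> list[str]:
    """Order services so each one is migrated after everything that calls it."""
    callers: dict[str, set[str]] = {service: set() for service in calls}
    for caller, callees in calls.items():
        for callee in callees:
            callers.setdefault(callee, set()).add(caller)
    # TopologicalSorter takes node -> predecessors. Using callers as
    # predecessors puts services that nothing depends on first.
    return list(TopologicalSorter(callers).static_order())


print(migration_order(calls))
```

If the graph contains a cycle, `static_order()` raises `graphlib.CycleError`, which is itself a useful audit finding: those services have to move into the monolith together.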

Example Packwerk configuration:

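    # packwerk.yml (repository root)
    include:
      - "{app,components}/**/*.{rb,rake,erb}"
    exclude:
      - "{spec,vendor}/**/*"
    package_paths:
      - "components/*"
    cache: false

    # components/payment/package.yml
    # payment may depend on order; any other cross-package reference is a violation
    enforce_dependencies: true
    dependencies:
      - components/order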
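Packwerk targets Ruby. For Python modules, a check in the same spirit can be sketched with the standard-library `ast` module. This is a minimal illustration, not a replacement for a maintained tool such as import-linter; the `ALLOWED` table and the function name are assumptions.

```python
import ast

# Hypothetical dependency rules: module -> modules it may import from.
ALLOWED = {
    "order": set(),
    "payment": {"order"},
    "notification": {"order"},
}


def boundary_violations(module: str, source: str) -> list[str]:
    """Return imports in `source` that reach into a module `module` may not use."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]
        else:
            continue
        for name in names:
            top = name.split(".")[0]
            # Only imports of other known modules are subject to the rules
            if top in ALLOWED and top != module and top not in ALLOWED.get(module, set()):
                violations.append(name)
    return violations


print(boundary_violations("order", "from payment.gateway import charge"))
print(boundary_violations("payment", "from order.events import OrderPlaced"))
```

Run over every file in a module directory during CI, a non-empty result would fail the build.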

Phase 3: Shared database + async boundaries (weeks 5-7)

Create a single PostgreSQL or MySQL database (or keep read replicas for the legacy services during migration).

For each service's tables, create a dedicated schema: `order_schema.orders`, `payment_schema.transactions`.

For cross-service events (e.g., order.placed -> notify), publish to a Kafka topic or Redis stream. Subscribers are async consumers in the monolith, not separate services.

Example event schema:

    # order/events.py
    import dataclasses
    import json


    @dataclasses.dataclass
    class OrderPlaced:
        order_id: str
        customer_id: str
        total: float

        def to_kafka_record(self) -> bytes:
            # Serialize the event as UTF-8 JSON for the Kafka message value
            return json.dumps(dataclasses.asdict(self)).encode()


    # notification/consumer.py
    from order.events import OrderPlaced

    # send_email is assumed to be provided elsewhere in notification/ (not shown)


    class OrderEventConsumer:
        def handle_order_placed(self, event: OrderPlaced):
            send_email(event.customer_id, f"Order {event.order_id} confirmed")
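On the consuming side, the record has to be decoded and routed to in-process handlers. The sketch below is self-contained and makes several assumptions: `from_kafka_record`, `HANDLERS`, and `dispatch` are hypothetical names, and the Kafka client itself is left out so that only the decode-and-dispatch step is shown.

```python
import dataclasses
import json
from typing import Callable


@dataclasses.dataclass
class OrderPlaced:
    order_id: str
    customer_id: str
    total: float

    def to_kafka_record(self) -> bytes:
        return json.dumps(dataclasses.asdict(self)).encode()

    @classmethod
    def from_kafka_record(cls, record: bytes) -> "OrderPlaced":
        # Hypothetical inverse of to_kafka_record
        return cls(**json.loads(record))


# Topic name -> in-process handlers registered by other modules
HANDLERS: dict[str, list[Callable[[OrderPlaced], None]]] = {"order.placed": []}


def dispatch(topic: str, record: bytes) -> None:
    """Decode one record and hand it to every handler subscribed to the topic."""
    event = OrderPlaced.from_kafka_record(record)
    for handler in HANDLERS.get(topic, []):
        handler(event)


# Usage: a stand-in notification handler that records what it would send
sent: list[tuple[str, str]] = []
HANDLERS["order.placed"].append(
    lambda e: sent.append((e.customer_id, f"Order {e.order_id} confirmed"))
)
dispatch("order.placed", OrderPlaced("o-1", "123", 100.0).to_kafka_record())
print(sent)
```

Keeping the handler registry in one place makes it easy to see which modules react to which events, which is the information a service mesh diagram used to carry.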

Phase 4: Single deployment pipeline (weeks 8-10)

Merge all services into monorepo.

Create a single Dockerfile that layers: base, app code, all migrations, all services.

In CI/CD, run tests for only the changed modules (use `git diff --name-only` to determine scope).

Deploy once per day or on manual trigger. All modules move together.

Example CI stage:

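    # .github/workflows/deploy.yml
    name: deploy
    on:
      push:
        branches: [main]
    jobs:
      test:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4
            with:
              fetch-depth: 0  # full history, so the previous commit is available to diff against
          - name: Test only the modules touched by this push
            run: |
              # Top-level directory of each changed file = module name
              modules=$(git diff --name-only ${{ github.event.before }} ${{ github.sha }} \
                | grep -E '^(order|payment|inventory)/' | cut -d/ -f1 | sort -u || true)
              for module in $modules; do
                pytest "$module/tests/"
              done
      deploy:
        needs: test
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4
          # Assumes the image is pushed to a registry the cluster can pull from
          # and that kubectl is already authenticated against the cluster.
          - run: docker build -t myapp:$(git rev-parse --short HEAD) .
          - run: kubectl set image deployment/myapp myapp=myapp:$(git rev-parse --short HEAD)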
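The path-to-module mapping that the shell pipeline performs can also be expressed as a small, testable function. This is a sketch; `MODULES` and `changed_modules` are hypothetical names, and the input would come from `git diff --name-only` in practice.

```python
MODULES = ("order", "payment", "inventory")


def changed_modules(changed_paths: list[str]) -> list[str]:
    """Map changed file paths to the sorted set of top-level modules they touch."""
    touched = {path.split("/", 1)[0] for path in changed_paths if "/" in path}
    return sorted(touched.intersection(MODULES))


paths = [
    "order/models.py",
    "order/tests/test_models.py",
    "README.md",
    "payment/api.py",
]
print(changed_modules(paths))
```

One caveat with this scoping: a change in `order/` can break `payment/` if payment depends on it, so a fuller version would also select modules that depend on the changed ones.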

Phase 5: Observability + remove old services (weeks 11-16)

Tag all logs with module name: `logger.info("Order placed", module="order", service_id="order-v1")`.

Use a single distributed trace: request enters monolith, spans are module/function, no service-to-service serialization.

Decommission the old 47 services. Keep them in git history, not in prod.
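With the standard-library `logging` module, a module tag can be attached through a `LoggerAdapter`. This is a minimal sketch under one notable assumption: the tag is stored under the hypothetical key `app_module`, because `module` is already a built-in `LogRecord` attribute and passing it via `extra` raises a `KeyError`.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object that includes the module tag."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "message": record.getMessage(),
            "app_module": getattr(record, "app_module", "unknown"),
        })


def module_logger(app_module: str, stream) -> logging.LoggerAdapter:
    """Build a logger whose records always carry the given module tag."""
    logger = logging.getLogger(f"monolith.{app_module}")
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    # The adapter injects its dict as `extra` on every call
    return logging.LoggerAdapter(logger, {"app_module": app_module})


buffer = io.StringIO()
log = module_logger("order", buffer)
log.info("Order placed")
print(buffer.getvalue().strip())
```

Libraries such as structlog accept arbitrary keyword arguments directly, which is the style the one-line example in the text uses.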

A real-world example

Gojek's 2025 consolidation: They had 47 microservices managing ride requests, payments, and driver logistics. Problem: 5% of requests hit 5+ services, adding 60-150ms of latency. On-call was a nightmare: a single slow service dragged the entire flow. They consolidated to 8 modular services (ride core, payment core, driver core, analytics, notification, etc.), kept Kafka for async (order -> payment -> notification), and used Packwerk-style boundaries. Result: P99 latency dropped from 800ms to 200ms, on-call pages went from 15/week to 2/week, deploy time went from 30 minutes (coordinating all 47 services) to 3 minutes.

The 47 services were not "independent teams." They were a matrix of dependencies nobody tracked. The 8 modular services are owned by 2-3 engineers per module, with clear responsibilities.

Testing the migration

1. Latency profile (before and after)

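    -- Measure P50, P99 latency from client to monolith in 5-minute buckets.
    -- PostgreSQL 14+ syntax; assumes a requests(datetime, service, duration_ms) table.
    SELECT
      date_bin('5 minutes', datetime, TIMESTAMP '2000-01-01') AS bucket,
      percentile_cont(0.50) WITHIN GROUP (ORDER BY duration_ms) AS p50,
      percentile_cont(0.99) WITHIN GROUP (ORDER BY duration_ms) AS p99,
      max(duration_ms) AS max
    FROM requests
    WHERE service = 'order-api'
    GROUP BY bucket
    ORDER BY bucket;

    -- Before migration: P99 = 800ms (5 hops, 160ms per hop)
    -- After migration:  P99 = 200ms (0 hops, all in-process)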
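If the raw durations are available outside the database (for example from a load-test export), the same profile can be computed with the standard library. The sketch below uses `statistics.quantiles`; the sample data is synthetic and the function name is an assumption.

```python
import statistics


def latency_profile(durations_ms: list[float]) -> dict[str, float]:
    """Summarize request durations as P50, P99 and max."""
    # quantiles(n=100) returns the 99 cut points for percentiles 1..99
    cuts = statistics.quantiles(durations_ms, n=100)
    return {"p50": cuts[49], "p99": cuts[98], "max": max(durations_ms)}


# Synthetic sample: 98 fast requests and 2 slow ones
samples = [20.0] * 98 + [800.0, 900.0]
profile = latency_profile(samples)
print(profile)
```

The example shows why P99 matters for this comparison: the median stays at the fast value while the tail is dominated by the two slow requests.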

2. Module boundary enforcement

    # Test that payment/ cannot import order/ private code
    cd payment
    if grep -rn --include='*.go' 'order/internal' .; then
      echo "payment/ imports order/internal" >&2
      exit 1
    fi
    # No output and exit status 0 means the boundary is clean

3. Async event flow

    # pytest integration test
    from order.events import OrderPlaced
    from notification.consumer import OrderEventConsumer

    # create_order and email_sent_to are project-specific test helpers (not shown)


    def test_order_to_notification_flow():
        # Create order, emit event
        order = create_order(customer_id="123", total=100.0)
        event = OrderPlaced(order.id, "123", 100.0)

        # Consumer receives and processes
        consumer = OrderEventConsumer()
        consumer.handle_order_placed(event)

        # Verify an email went to the customer the consumer addressed
        assert email_sent_to("123")

4. Chaos test: single slow module

    # k6 chaos test: what if order/ service is slow?
    # In monolith, we add latency to order module only
    k6 run --vus 100 --duration 5m - <<'EOF'
    import http from 'k6/http';
    import { check } from 'k6';

    export default function () {
      // Send the order as JSON; a plain object body would be form-encoded
      const payload = JSON.stringify({
        customer_id: '123',
        items: [{ sku: 'ABC', qty: 1 }],
      });
      const params = { headers: { 'Content-Type': 'application/json' } };
      const res = http.post('http://monolith:8000/api/orders', payload, params);
      check(res, { 'status is 201': (r) => r.status === 201 });
    }
    EOF
    # Monolith latency: stable, order module slow doesn't cascade
    # 47-service arch: latency explodes (cascading failures)

Failure modes

Package boundaries are suggestions, not rules. Someone imports order/internal directly from payment. You notice: tests pass but on-call wakes up with circular dependency bugs. Recovery: automate the boundary check in CI. Fail the build on violations. Document exceptions in code.

The "one database" becomes a bottleneck. A developer optimizes payment queries, but the index build blocks writes to the payments table for 2 minutes. You notice: timeouts spike across order, inventory, notification. Recovery: build indexes without blocking writes (CREATE INDEX CONCURRENTLY in PostgreSQL), set a lock timeout on migrations, avoid full-table schema changes during peak hours, prepare migration scripts offline.

Async event poison pill. A buggy notification consumer reads an order.placed event, tries to fetch a customer that no longer exists, crashes forever. You notice: orders pile up in Kafka, new orders hang. Recovery: implement dead-letter queues (Kafka topic for failed events), scale the consumer separately, add circuit breakers on downstream calls.

The migration is incomplete. 3 of 47 services stayed microservices for "reasons." Now your monolith still calls service-X which calls service-Y which calls the monolith. You notice: latency didn't improve, you added a roundtrip. Recovery: finish the migration or accept the latency trade-off. Half measures hurt.

Module test failures in CI. A developer changes the order/ module; tests pass locally but CI fails because it installs dependencies from scratch and resolves different versions. You notice: slow CI, flaky deploys. Recovery: pin dependencies with a lockfile, cache them in CI, and separate unit tests (fast, always run) from integration tests (slow, only changed modules).
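The dead-letter recovery described for the poison-pill case can be sketched without a Kafka client. Everything here is illustrative: `consume_with_dlq`, the `dead_letters` list (a stand-in for a dead-letter topic), and the sample events are assumptions.

```python
from typing import Callable


def consume_with_dlq(
    events: list[dict],
    handler: Callable[[dict], None],
    dead_letters: list[dict],
    max_attempts: int = 3,
) -> int:
    """Process events in order; park events that keep failing instead of blocking."""
    processed = 0
    for event in events:
        for attempt in range(1, max_attempts + 1):
            try:
                handler(event)
                processed += 1
                break
            except Exception as exc:
                if attempt == max_attempts:
                    # Stand-in for publishing to a dead-letter Kafka topic
                    dead_letters.append({"event": event, "error": repr(exc)})
    return processed


CUSTOMERS = {"123": "customer@example.com"}


def notify(event: dict) -> None:
    CUSTOMERS[event["customer_id"]]  # raises KeyError for a deleted customer


dlq: list[dict] = []
events = [
    {"order_id": "o-1", "customer_id": "gone"},
    {"order_id": "o-2", "customer_id": "123"},
]
print(consume_with_dlq(events, notify, dlq), len(dlq))
```

The event for the missing customer ends up in the dead-letter list after three attempts, and the next order is still processed instead of hanging behind it.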

When NOT to do this

If you have 100+ engineers and clear team boundaries. At that scale, microservices (with proper async boundaries and monitoring) win. One team per service is now tractable.

If your workload is truly independent batch jobs. A data pipeline that reads from S3, processes, writes to Snowflake doesn't need a monolith. Keep it as separate services/Lambda.

If your core constraint is deployment risk, not latency. A regulated financial services firm may prefer 47 independent services for auditability, even if latency suffers. Acknowledge the trade-off.

What to ship this quarter

Audit: dependency graph and latency profile of all 47 services (week 1)

Monorepo: create single repo structure with module directories (week 2)

Packwerk (or equivalent): enforce 5-8 core module boundaries (week 3-4)

Kafka topics: define async event schema for cross-module events (week 4)

One migration: consolidate 2-3 services into monolith, test latency improvement (week 5-7)

Deploy pipeline: single CI/CD, tests only changed modules (week 8-10)

Further reading

See references.md. Top picks:

Amazon Prime Video, "Scaling up the Prime Video audio/video monitoring service and reducing costs by 90%" (2023). The case study that shifted the needle on distributed monoliths.

Shopify, Packwerk announcement (2020). How a 10,000-person company enforces module boundaries at scale.

Sam Newman, "Building Microservices, 2nd edition" (2021). Actually says "monolith first." The community forgot this part.