Building a Deployment Health Validator

5 Subtle Bugs That Break Production (and how to find them)

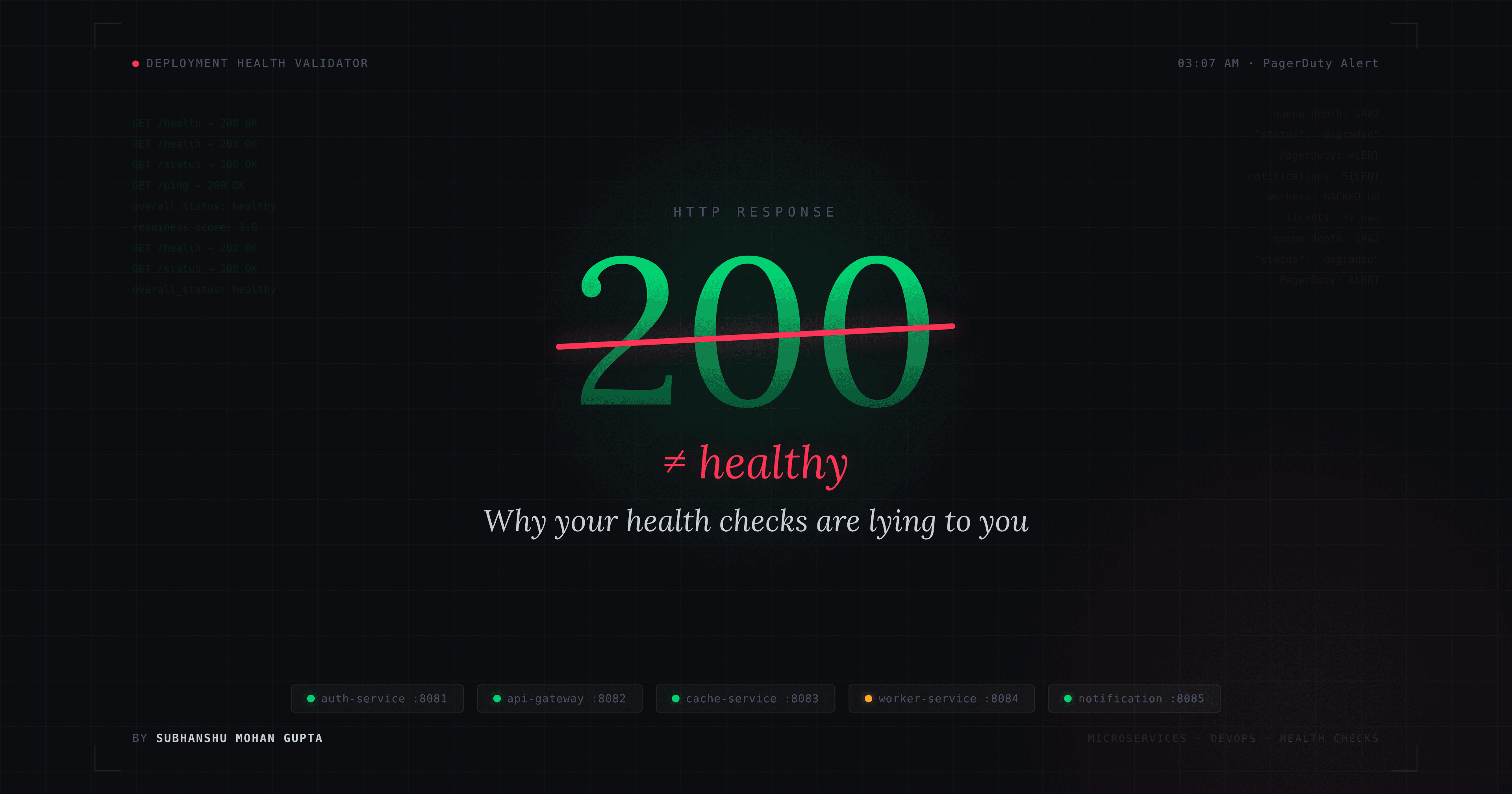

A deep-dive into microservice health checking, topological startup ordering and why HTTP 200 does not mean a service is healthy.

The Incident

Picture this: your on-call rotation fires a PagerDuty alert at 3:07 AM. The deployment pipeline says green. Every service returned HTTP 200. The readiness check passed. But your notification pipeline is completely silent, job workers are backed up with 1,482 queued tasks, and customers are already filing tickets.

You pull up the deployment validator logs. Everything looks fine on the surface. The tool reported overall_status: healthy. It was wrong on every count.

This exact scenario is what the Deployment Health Validator task is built around. Five real-world bugs, carefully placed, each one plausible enough that most automated agents (and plenty of human engineers) miss at least one. This post walks through each bug, the architecture behind the validator, and how to build one correctly from scratch.

What Are We Building?

A deployment health validator does four things:

Reads a manifest that describes your microservices (ports, health endpoints, dependencies, criticality)

Probes each service's health endpoint and parses the response body, not just the HTTP status

Computes a weighted readiness score based on how critical each service is

Runs a topological sort (Kahn's algorithm) to produce a correct startup order where every dependency starts before the services that depend on it

The output is a structured JSON report that your deployment pipeline can parse and gate on.
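
As a rough illustration of what "gate on" can mean, a pipeline step might turn the report into an exit code. This is a minimal sketch: the function name gate_exit_code and the policy of letting "degraded" through are assumptions for illustration, not part of the task. The field names match the report shown in Step 6.

```python
import json

# Hypothetical policy: which overall_status values may proceed.
# Allowing "degraded" is an assumption; a stricter pipeline might allow only "healthy".
ALLOWED_STATUSES = {"healthy", "degraded"}


def gate_exit_code(report: dict) -> int:
    """Return 0 if the deployment may proceed, 1 otherwise."""
    if not report.get("critical_services_healthy", False):
        return 1
    return 0 if report.get("overall_status") in ALLOWED_STATUSES else 1


# Example with an inline report instead of a file on disk.
sample = json.loads(
    '{"overall_status": "degraded", "readiness_score": 0.9,'
    ' "critical_services_healthy": true}'
)
print(gate_exit_code(sample))  # prints 0
```

In a real pipeline the dict would come from json.load on the report file, and the return value would be passed to sys.exit.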

Architecture Overview

Production Stack (mock services)

| Service | Port | Endpoint | Response |
|---|---|---|---|
| auth-service | :8081 | /health | {"status": "ok"} |
| api-gateway | :8082 | /health | {"status": "healthy"} |
| cache-service | :8083 | /ping | "pong" (plain text!) |
| worker-service | :8084 | /status | {"status": "degraded", "queue_depth": 1482} |
| notification-service | :8085 | /health | {"status": "ok"} |

Dependency Graph
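
The dependency edges can be read straight off the manifest in Step 2. As a plain-text stand-in for a diagram, here they are as a Python mapping from each service to the services it depends on. The variable name DEPENDENCIES is only for illustration.

```python
# Each key depends on every service in its list (taken from the manifest's
# "dependencies" fields).
DEPENDENCIES = {
    "auth-service": [],
    "cache-service": [],
    "api-gateway": ["auth-service", "cache-service"],
    "worker-service": ["api-gateway", "cache-service"],
    "notification-service": ["worker-service"],
}

# Print one "dependency -> dependent" edge per line.
for service, deps in DEPENDENCIES.items():
    for dep in deps:
        print(f"{dep} -> {service}")
```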

Real-World Parallel: Netflix's Hystrix, AWS Health Dashboards and Kubernetes Readiness Probes

Before diving into code, here's why this problem matters in production systems.

AWS Elastic Load Balancer will route traffic to a backend if its health check returns HTTP 200 on /health. That's it. If your app returns {"status": "starting_up"} with a 200, ELB thinks it's healthy and sends it live traffic. This exact failure mode took down a major e-commerce platform in 2019 during a Black Friday deploy.

Kubernetes readiness probes are a direct response to this problem. A pod's readiness probe checks whether it should receive traffic, separate from the liveness probe, which checks if it should be restarted. The probe can check an HTTP endpoint, but Kubernetes does not parse the body. You have to do that yourself, in your validator.

Netflix's Chaos Engineering toolkit specifically tests whether services correctly report unhealthy states under load. worker-service in this task is modelled on exactly this pattern: the service is technically "up" (HTTP 200) but degraded under load (queue_depth: 1482). A naive health checker marks it healthy. A correct one reads the body.
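
To make the difference concrete, here is a minimal sketch that classifies one response both ways. It works on an already-fetched (status_code, body_text) pair so it runs without a network. The function names and the set of accepted status strings are assumptions for illustration.

```python
import json

HEALTHY_VALUES = {"ok", "up", "healthy"}  # assumed vocabulary


def naive_is_healthy(status_code: int, body_text: str) -> bool:
    """Status-code-only check: the body is never inspected."""
    return status_code == 200


def body_aware_is_healthy(status_code: int, body_text: str) -> bool:
    """Also inspect the JSON "status" field when the body is a JSON object."""
    if status_code != 200:
        return False
    try:
        body = json.loads(body_text)
    except ValueError:
        return True  # non-JSON body such as "pong": fall back to the status code
    if not isinstance(body, dict):
        return True
    return body.get("status", "ok") in HEALTHY_VALUES


worker = (200, '{"status": "degraded", "queue_depth": 1482}')
print(naive_is_healthy(*worker))       # True
print(body_aware_is_healthy(*worker))  # False
```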

Project Structure

    deployment-health-validator/
    ├── task.toml                       # Task metadata, timeouts, resource limits
    ├── instruction.md                  # What an agent (or engineer) must fix
    ├── environment/
    │   ├── Dockerfile                  # Container definition
    │   ├── deployment_manifest.yaml    # Service definitions (with a decoy key)
    │   ├── mock_services.py            # Five Flask servers simulating endpoints
    │   └── validator.py                # THE BROKEN FILE
    ├── solution/
    │   └── solve.sh                    # Oracle: patches all 5 bugs and runs
    └── tests/
        └── test_outputs.py             # 19 pytest assertions

Step-by-Step Implementation Guide

Step 1: Set Up the Environment

    git clone https://github.com/SubhanshuMG/terminal-bench-2-hard-devops-diagnostics.git
    cd terminal-bench-2-hard-devops-diagnostics
    python -m venv .venv
    source .venv/bin/activate  # Windows: .venv\Scripts\activate
    pip install flask requests pyyaml pytest

Step 2: Write the Deployment Manifest

The manifest has one deliberate trap: a top-level services: key that contains only a legacy monitoring entry. The real services live under deployment.services. This mirrors real-world YAML configs where legacy keys accumulate over time and create ambiguity.

    # deployment_manifest.yaml

    # Legacy monitoring registry (NOT the authoritative list)
    services:
      - name: "metrics-collector"
        port: 9091
        health_endpoint: "/metrics"
        dependencies: []
        criticality: "low"

    # Authoritative deployment configuration
    deployment:
      name: "production-stack"
      services:
        - name: "auth-service"
          port: 8081
          health_endpoint: "/health"
          dependencies: []
          criticality: "high"

        - name: "api-gateway"
          port: 8082
          health_endpoint: "/health"
          dependencies:
            - "auth-service"
            - "cache-service"
          criticality: "high"

        - name: "cache-service"
          port: 8083
          health_endpoint: "/ping"    # note: NOT /health
          dependencies: []
          criticality: "medium"

        - name: "worker-service"
          port: 8084
          health_endpoint: "/status"  # returns HTTP 200 but body says degraded
          dependencies:
            - "api-gateway"
            - "cache-service"
          criticality: "low"

        - name: "notification-service"
          port: 8085
          health_endpoint: "/health"
          dependencies:
            - "worker-service"
          criticality: "low"

Step 3: Build the Mock Services

These five Flask servers simulate real microservice health endpoints. The key design choice: worker-service returns HTTP 200 with {"status": "degraded"}. Any validator that only checks the status code will silently miss this.

    #!/usr/bin/env python3
    # mock_services.py
    import logging
    import threading

    from flask import Flask, jsonify

    # Silence per-request werkzeug logging so the startup banner stays readable.
    log = logging.getLogger("werkzeug")
    log.setLevel(logging.ERROR)

    auth_app = Flask("auth-service")

    @auth_app.route("/health")
    def auth_health():
        return jsonify({"status": "ok", "version": "2.1.0"}), 200

    gateway_app = Flask("api-gateway")

    @gateway_app.route("/health")
    def gateway_health():
        return jsonify({"status": "healthy", "uptime_seconds": 3601}), 200

    cache_app = Flask("cache-service")

    @cache_app.route("/ping")
    def cache_ping():
        return "pong", 200  # plain text, not JSON

    worker_app = Flask("worker-service")

    @worker_app.route("/status")
    def worker_status():
        # HTTP 200 but the service is overloaded
        return jsonify({"status": "degraded", "queue_depth": 1482}), 200

    notif_app = Flask("notification-service")

    @notif_app.route("/health")
    def notif_health():
        return jsonify({"status": "ok", "pending_notifications": 0}), 200

    def _run(app, port):
        app.run(host="0.0.0.0", port=port, debug=False, use_reloader=False)

    if __name__ == "__main__":
        specs = [
            (auth_app, 8081, "auth-service /health"),
            (gateway_app, 8082, "api-gateway /health"),
            (cache_app, 8083, "cache-service /ping"),
            (worker_app, 8084, "worker-service /status"),
            (notif_app, 8085, "notification-service /health"),
        ]
        threads = []
        for app, port, label in specs:
            # One daemon thread per Flask app so all five ports are served at once.
            t = threading.Thread(target=_run, args=(app, port), daemon=True)
            t.start()
            threads.append(t)
            print(f" started {label} -> http://0.0.0.0:{port}")
        print("All mock services running. Ctrl-C to stop.")
        for t in threads:
            t.join()

Start them in one terminal: python mock_services.py

Verify manually:

    curl http://localhost:8081/health   # {"status": "ok", "version": "2.1.0"}
    curl http://localhost:8082/health   # {"status": "healthy", "uptime_seconds": 3601}
    curl http://localhost:8083/ping     # pong
    curl http://localhost:8084/status   # {"status": "degraded", "queue_depth": 1482}
    curl http://localhost:8085/health   # {"status": "ok", "pending_notifications": 0}

First discipline: always curl your endpoints before writing a health checker. The field names, the endpoint paths, and the semantic meaning of a 200 response all matter.

Step 4: The Broken Validator (And All Five Bugs)

Here is validator.py as it ships in the repository, with each bug annotated:

    #!/usr/bin/env python3
    # validator.py (BROKEN)
    import json
    import yaml
    import requests
    from datetime import datetime, timezone
    from collections import deque


    def load_services(manifest_path: str) -> list:
        with open(manifest_path) as f:
            config = yaml.safe_load(f)
        # BUG 1: reads config["services"] which is the legacy monitoring entry
        # only "metrics-collector" on port 9091 is returned; none of the real services
        return config["services"]


    def check_health(service: dict) -> dict:
        url = f"http://127.0.0.1:{service['port']}{service['health_endpoint']}"
        try:
            resp = requests.get(url, timeout=5)
            if resp.status_code != 200:
                return {"status": "unhealthy", "http_status": resp.status_code,
                        "criticality": service["criticality"]}
            try:
                body = resp.json()
                # BUG 2: reads "health_status" key; no service ever sends this field
                # body.get("health_status", "ok") always returns default "ok"
                # worker-service sends {"status": "degraded"} but is reported healthy
                state = body.get("health_status", "ok")
            except ValueError:
                state = "ok"
            healthy = state in ("ok", "up", "healthy")
            return {"status": "healthy" if healthy else "unhealthy",
                    "http_status": resp.status_code,
                    "criticality": service["criticality"]}
        except requests.exceptions.RequestException:
            return {"status": "unhealthy", "http_status": 0,
                    "criticality": service["criticality"]}


    def compute_startup_order(services: list) -> list:
        names = [s["name"] for s in services]
        deps_map = {s["name"]: s.get("dependencies", []) for s in services}
        graph = {n: [] for n in names}
        in_degree = {n: 0 for n in names}
        for svc, deps in deps_map.items():
            for dep in deps:
                # BUG 3: edges are reversed; leaf nodes get scheduled first
                # should be graph[dep].append(svc) and in_degree[svc] += 1
                graph[svc].append(dep)
                in_degree[dep] += 1
        queue = deque(n for n in names if in_degree[n] == 0)
        order = []
        while queue:
            node = queue.popleft()
            order.append(node)
            for neighbor in graph[node]:
                in_degree[neighbor] -= 1
                if in_degree[neighbor] == 0:
                    queue.append(neighbor)
        return order


    def compute_readiness_score(services: list, statuses: dict) -> float:
        # BUG 4: all weights equal 1; criticality is ignored entirely
        # spec says high=3, medium=2, low=1
        weight_map = {"high": 1, "medium": 1, "low": 1}
        total = sum(weight_map[s["criticality"]] for s in services)
        healthy = sum(weight_map[s["criticality"]]
                      for s in services
                      if statuses[s["name"]]["status"] == "healthy")
        return round(healthy / total, 4) if total else 0.0


    def determine_status(services: list, statuses: dict, score: float):
        # BUG 5: checks ALL services instead of only high-criticality ones
        # worker-service (low criticality) being unhealthy triggers "critical"
        critical_ok = all(
            statuses[s["name"]]["status"] == "healthy"
            for s in services  # should be: for s in services if s["criticality"] == "high"
        )
        if not critical_ok:
            return "critical", critical_ok
        if score >= 0.95:
            return "healthy", critical_ok
        if score >= 0.70:
            return "degraded", critical_ok
        return "not_ready", critical_ok

Breaking Down Each Bug

Bug 1: Wrong YAML Key Path

    # BROKEN:
    return config["services"]

    # FIXED:
    return config["deployment"]["services"]

The manifest has two services keys at different nesting levels. The top-level one is explicitly labelled as a "legacy monitoring registry." The validator reads the wrong one, picks up only metrics-collector, and probes port 9091 instead of the real five services.

Why it's hard to catch: The broken code runs without errors. It probes an endpoint (9091) that isn't listening, gets a connection refused, marks metrics-collector as unhealthy, and writes a report that looks structurally valid. No stack trace, just wrong data.
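
One way to make this class of mistake louder is to validate what the loader returns before probing anything. The sketch below works on an already-parsed config dict (the shape yaml.safe_load would return). The function name and the specific checks are assumptions for illustration, not the task's required fix.

```python
REQUIRED_FIELDS = {"name", "port", "health_endpoint", "criticality"}


def load_authoritative_services(config: dict) -> list:
    """Return deployment.services, raising instead of silently falling back."""
    try:
        services = config["deployment"]["services"]
    except (KeyError, TypeError) as exc:
        raise ValueError("manifest has no deployment.services section") from exc
    if not services:
        raise ValueError("deployment.services is empty")
    for svc in services:
        missing = REQUIRED_FIELDS - set(svc)
        if missing:
            raise ValueError(f"service {svc.get('name', '?')} lacks {sorted(missing)}")
    return services


# Trimmed-down stand-in for the parsed manifest, including the decoy key.
config = {
    "services": [{"name": "metrics-collector", "port": 9091,
                  "health_endpoint": "/metrics", "criticality": "low"}],
    "deployment": {
        "name": "production-stack",
        "services": [{"name": "auth-service", "port": 8081,
                      "health_endpoint": "/health", "criticality": "high"}],
    },
}
print([s["name"] for s in load_authoritative_services(config)])  # ['auth-service']
```

A manifest that contains only the legacy top-level key now fails with a ValueError instead of producing a structurally valid but wrong report.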

Bug 2: Wrong JSON Body Field Name (the hardest one)

    # BROKEN:
    state = body.get("health_status", "ok")

    # FIXED:
    state = body.get("status", "ok")

This is the most insidious bug in the set. worker-service returns {"status": "degraded", "queue_depth": 1482} with HTTP 200. The broken code reads the health_status field, which doesn't exist in any response. dict.get() returns the default "ok". The validator marks worker-service as healthy.

Real-world equivalent: An AWS Lambda function returns {"statusCode": 200, "body": "{\"error\": \"DB_TIMEOUT\"}"}. If you check response.statusCode == 200 and call it done, you miss the error in the body entirely.

Why agents miss this: The output looks correct. All five services are reported healthy, including the degraded one. No exceptions are thrown. You would only catch it by curling the endpoint yourself and tracing exactly which field the code reads from the response.

| Service | Body | Field read (broken) | Result |
|---|---|---|---|
| auth-service | {"status": "ok"} | health_status (missing) | default "ok" → healthy |
| api-gateway | {"status": "healthy"} | health_status (missing) | default "ok" → healthy |
| cache-service | "pong" (not JSON) | ValueError caught | "ok" → healthy |
| worker-service | {"status": "degraded"} | health_status (missing) | default "ok" → wrongly healthy |
| notification-service | {"status": "ok"} | health_status (missing) | default "ok" → healthy |
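
The root cause is a default value that is indistinguishable from a real healthy answer. A stricter alternative, sketched below, uses a sentinel so that a missing field surfaces as "unknown" instead of "ok". This goes beyond the one-word fix the task asks for; the helper names read_state and classify are hypothetical.

```python
_MISSING = object()  # sentinel that no real response value can equal
HEALTHY_VALUES = {"ok", "up", "healthy"}


def read_state(body: dict, field: str = "status") -> str:
    """Return the field's value, or "unknown" when the field is absent."""
    value = body.get(field, _MISSING)
    return "unknown" if value is _MISSING else str(value)


def classify(body: dict, field: str = "status") -> str:
    """Map a response body to healthy / unhealthy / unknown."""
    state = read_state(body, field)
    if state == "unknown":
        return "unknown"
    return "healthy" if state in HEALTHY_VALUES else "unhealthy"


worker_body = {"status": "degraded", "queue_depth": 1482}
print(classify(worker_body, field="health_status"))  # unknown
print(classify(worker_body))                         # unhealthy
```

With this shape, reading the wrong field name would have reported every JSON service as "unknown", which is much harder to overlook than a clean row of "healthy".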

Bug 3: Reversed Topological Sort

    # BROKEN (edges reversed):
    graph[svc].append(dep)
    in_degree[dep] += 1

    # FIXED (dep must start before svc):
    graph[dep].append(svc)
    in_degree[svc] += 1

Kahn's algorithm itself is structurally correct. The bug is in how the graph is constructed. The broken code points from a service back to its dependencies, inverting the dependency flow. Nodes with no outgoing edges (the real leaf nodes like notification-service) end up with zero in-degree and get scheduled first.

Correct startup order:

auth-service → cache-service → api-gateway → worker-service → notification-service

Broken output:

notification-service → worker-service → api-gateway → auth-service → cache-service

Real-world consequence: You start notification-service before worker-service is ready. It tries to connect, fails, and crashes. Your deployment fails not because of a bug in a service, but because of a bug in the tool that decides the order to start services.
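
Both orders can be reproduced, and checked mechanically, with a few lines of Python. The sketch below is a compact restatement of the validator's Kahn loop with a flag to flip the edge direction, plus a checker that lists every dependency that would start too late. The names kahn_order, order_violations, and reversed_edges are made up for this example, and the cycle check is an addition the validator in this post does not have.

```python
from collections import deque

# Dependencies in manifest order: service -> services it depends on.
deps = {
    "auth-service": [],
    "api-gateway": ["auth-service", "cache-service"],
    "cache-service": [],
    "worker-service": ["api-gateway", "cache-service"],
    "notification-service": ["worker-service"],
}


def kahn_order(deps: dict, reversed_edges: bool = False) -> list:
    """Kahn's algorithm; reversed_edges=True rebuilds the Bug 3 graph."""
    graph = {name: [] for name in deps}
    in_degree = {name: 0 for name in deps}
    for svc, svc_deps in deps.items():
        for dep in svc_deps:
            src, dst = (svc, dep) if reversed_edges else (dep, svc)
            graph[src].append(dst)
            in_degree[dst] += 1
    queue = deque(name for name in deps if in_degree[name] == 0)
    order = []
    while queue:
        node = queue.popleft()
        order.append(node)
        for neighbor in graph[node]:
            in_degree[neighbor] -= 1
            if in_degree[neighbor] == 0:
                queue.append(neighbor)
    if len(order) != len(deps):
        # Nodes on a cycle never reach in-degree 0 and are silently dropped.
        raise ValueError("dependency cycle detected")
    return order


def order_violations(order: list, deps: dict) -> list:
    """Return (dependency, service) pairs where the dependency starts too late."""
    position = {name: i for i, name in enumerate(order)}
    return [(dep, svc) for svc, svc_deps in deps.items()
            for dep in svc_deps if position[dep] > position[svc]]


print(kahn_order(deps))
print(kahn_order(deps, reversed_edges=True))
print(len(order_violations(kahn_order(deps, reversed_edges=True), deps)))  # 5
```

The reversed graph violates all five dependency edges, while the correct one violates none. Running a checker like order_violations against the tool's own output is a cheap guard against this kind of inversion.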

Bug 4: All Criticality Weights Equal 1

    # BROKEN:
    weight_map = {"high": 1, "medium": 1, "low": 1}

    # FIXED:
    weight_map = {"high": 3, "medium": 2, "low": 1}

The task specification defines weights of 3/2/1 for high/medium/low criticality. With equal weights:

Broken score: 4 healthy out of 5 total = 0.8

Correct score: (3 + 3 + 2 + 1) / (3 + 3 + 2 + 1 + 1) = 9/10 = 0.9

The broken score still exceeds the 0.70 threshold and stays below 0.95, so the overall status computation might accidentally give the right answer for the wrong reason. But downstream systems relying on an accurate score for SLA calculations will get wrong numbers.
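
The arithmetic is easy to verify in a few lines. This sketch hard-codes the criticality of each service from the manifest and treats worker-service as the only unhealthy one; the names CRITICALITY, UNHEALTHY, and readiness_score are illustrative.

```python
CRITICALITY = {
    "auth-service": "high",
    "api-gateway": "high",
    "cache-service": "medium",
    "worker-service": "low",
    "notification-service": "low",
}
UNHEALTHY = {"worker-service"}


def readiness_score(weights: dict) -> float:
    """Weighted share of healthy services, rounded to 4 places like the validator."""
    total = sum(weights[c] for c in CRITICALITY.values())
    healthy = sum(weights[c] for name, c in CRITICALITY.items()
                  if name not in UNHEALTHY)
    return round(healthy / total, 4)


print(readiness_score({"high": 1, "medium": 1, "low": 1}))  # 0.8
print(readiness_score({"high": 3, "medium": 2, "low": 1}))  # 0.9
```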

Bug 5: Critical Services Check Ignores Criticality

    # BROKEN:
    critical_ok = all(
        statuses[s["name"]]["status"] == "healthy"
        for s in services
    )

    # FIXED:
    critical_ok = all(
        statuses[s["name"]]["status"] == "healthy"
        for s in services
        if s["criticality"] == "high"
    )

With worker-service (low criticality) being unhealthy, the broken code sets critical_services_healthy = False and returns overall_status: "critical". The correct answer is "degraded": all high-criticality services are healthy, but the readiness score (0.9) falls below the 0.95 threshold for a fully healthy deployment.

Real-world consequence: A "critical" status might trigger an automated rollback, page every on-call engineer in the org, or block a release. Triggering a critical alarm because a low-priority background worker is degraded is exactly the kind of alert fatigue that causes engineers to start ignoring pages.
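
One edge case of the filtered check is worth knowing: all() over an empty iterable returns True, so a manifest with no high-criticality services passes the critical check vacuously. Whether that is acceptable is a policy decision. The sketch below shows the behaviour and one stricter variant; the helper name and the require_some flag are hypothetical.

```python
def critical_services_ok(services, statuses, require_some=False):
    """True when every high-criticality service is healthy.

    With require_some=True, an empty high-criticality set counts as a
    failure instead of passing vacuously.
    """
    high = [s for s in services if s["criticality"] == "high"]
    if require_some and not high:
        return False
    return all(statuses[s["name"]]["status"] == "healthy" for s in high)


# A manifest with no high-criticality services at all.
services = [{"name": "worker-service", "criticality": "low"}]
statuses = {"worker-service": {"status": "unhealthy"}}
print(critical_services_ok(services, statuses))                     # True
print(critical_services_ok(services, statuses, require_some=True))  # False
```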

Step 5: The Fixed Validator

    #!/usr/bin/env python3
    # validator_fixed.py
    import json
    import yaml
    import requests
    from datetime import datetime, timezone
    from collections import deque


    def load_services(manifest_path: str) -> list:
        with open(manifest_path) as f:
            config = yaml.safe_load(f)
        # FIX 1: correct key path
        return config["deployment"]["services"]


    def check_health(service: dict) -> dict:
        url = f"http://127.0.0.1:{service['port']}{service['health_endpoint']}"
        try:
            resp = requests.get(url, timeout=5)
            if resp.status_code != 200:
                return {"status": "unhealthy", "http_status": resp.status_code,
                        "criticality": service["criticality"]}
            try:
                body = resp.json()
                # FIX 2: read the correct field name "status"
                status_ok = body.get("status", "ok") in ("ok", "up", "healthy")
            except ValueError:
                status_ok = True  # non-JSON (e.g. "pong"): HTTP 200 is sufficient
            return {"status": "healthy" if status_ok else "unhealthy",
                    "http_status": resp.status_code,
                    "criticality": service["criticality"]}
        except requests.exceptions.RequestException:
            return {"status": "unhealthy", "http_status": 0,
                    "criticality": service["criticality"]}


    def compute_startup_order(services: list) -> list:
        names = [s["name"] for s in services]
        deps_map = {s["name"]: s.get("dependencies", []) for s in services}
        graph = {n: [] for n in names}
        in_degree = {n: 0 for n in names}
        for svc, deps in deps_map.items():
            for dep in deps:
                # FIX 3: correct edge direction; dep starts before svc
                graph[dep].append(svc)
                in_degree[svc] += 1
        queue = deque(n for n in names if in_degree[n] == 0)
        order = []
        while queue:
            node = queue.popleft()
            order.append(node)
            for neighbor in graph[node]:
                in_degree[neighbor] -= 1
                if in_degree[neighbor] == 0:
                    queue.append(neighbor)
        return order


    def compute_readiness_score(services: list, statuses: dict) -> float:
        # FIX 4: correct criticality weights
        weight_map = {"high": 3, "medium": 2, "low": 1}
        total = sum(weight_map[s["criticality"]] for s in services)
        healthy = sum(weight_map[s["criticality"]]
                      for s in services
                      if statuses[s["name"]]["status"] == "healthy")
        return round(healthy / total, 4) if total else 0.0


    def determine_status(services: list, statuses: dict, score: float):
        # FIX 5: only check high-criticality services
        critical_ok = all(
            statuses[s["name"]]["status"] == "healthy"
            for s in services
            if s["criticality"] == "high"
        )
        if not critical_ok:
            return "critical", critical_ok
        if score >= 0.95:
            return "healthy", critical_ok
        if score >= 0.70:
            return "degraded", critical_ok
        return "not_ready", critical_ok


    def main():
        manifest_path = "/app/deployment_manifest.yaml"
        output_path = "/app/deployment_report.json"

        services = load_services(manifest_path)

        statuses = {}
        for svc in services:
            result = check_health(svc)
            statuses[svc["name"]] = result
            print(f" {svc['name']:25s} {result['status']:10s} (HTTP {result['http_status']})")

        startup_order = compute_startup_order(services)
        score = compute_readiness_score(services, statuses)
        overall_status, critical_ok = determine_status(services, statuses, score)

        report = {
            "deployment_name": "production-stack",
            "overall_status": overall_status,
            "readiness_score": score,
            "service_statuses": statuses,
            "startup_order": startup_order,
            "critical_services_healthy": critical_ok,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        }

        with open(output_path, "w") as f:
            json.dump(report, f, indent=2)

        print(f"\nReport written to {output_path}")
        print(f"Overall status : {overall_status}")
        print(f"Readiness score: {score}")


    if __name__ == "__main__":
        main()

Step 6: Expected Output

    {
      "deployment_name": "production-stack",
      "overall_status": "degraded",
      "readiness_score": 0.9,
      "service_statuses": {
        "auth-service": { "status": "healthy", "http_status": 200, "criticality": "high" },
        "api-gateway": { "status": "healthy", "http_status": 200, "criticality": "high" },
        "cache-service": { "status": "healthy", "http_status": 200, "criticality": "medium" },
        "worker-service": { "status": "unhealthy", "http_status": 200, "criticality": "low" },
        "notification-service": { "status": "healthy", "http_status": 200, "criticality": "low" }
      },
      "startup_order": [
        "auth-service",
        "cache-service",
        "api-gateway",
        "worker-service",
        "notification-service"
      ],
      "critical_services_healthy": true,
      "timestamp": "2024-01-01T00:00:00+00:00"
    }

Score derivation (high=3, medium=2, low=1):

Healthy weight: auth(3) + api-gateway(3) + cache(2) + notification(1) = 9

Total weight: 9 + worker(1) = 10

Readiness score: 9/10 = 0.9

Status logic:

critical_services_healthy = true (both high services are healthy)

score = 0.9, which is < 0.95

result: "degraded"

Step 7: The Dockerfile

    FROM python:3.12-slim

    RUN apt-get update && \
        apt-get install -y --no-install-recommends curl && \
        rm -rf /var/lib/apt/lists/*

    WORKDIR /app

    COPY requirements.txt /app/
    RUN pip install --no-cache-dir flask==3.0.3 requests==2.32.3 pyyaml==6.0.2

    COPY * /app/

    # Start mock services in background, wait for auth-service to be ready,
    # then hold the container open for the agent or oracle to exec into.
    CMD ["/bin/bash", "-c", \
         "python /app/mock_services.py > /tmp/mock_services.log 2>&1 & \
          until curl -sf http://localhost:8081/health > /dev/null 2>&1; do sleep 1; done && \
          sleep infinity"]

Build and run:

    docker build -t deployment-validator ./deployment-health-validator/environment/
    docker run -it --rm deployment-validator bash

    # Inside the container:
    python /app/mock_services.py &
    sleep 2
    python /app/validator.py   # or run the fixed version
    cat /app/deployment_report.json

Step 8: Run the Test Suite

The 19 tests break down as follows:

| Test | Catches |
|---|---|
| test_report_file_exists | Validator ran at all |
| test_report_top_level_keys | Schema correctness |
| test_deployment_name | Bug 1 (wrong key path returns wrong name) |
| test_timestamp_format | ISO 8601 UTC format |
| test_all_five_services_present | Bug 1 (only 1 service if wrong key) |
| test_auth_service_healthy | Service probing works |
| test_api_gateway_healthy | Service probing works |
| test_cache_service_healthy | Agent read the manifest (endpoint is /ping) |
| test_worker_service_unhealthy | Bug 2 (wrong field name) |
| test_notification_service_healthy | Service probing works |
| test_service_criticality_values | Manifest parsing |
| test_readiness_score | Bug 4 (weights) |
| test_critical_services_healthy | Bug 5 (criticality filter) |
| test_overall_status_degraded | All bugs combined |
| test_startup_order_has_all_services | Bug 3 (topo sort) |
| test_startup_order_auth_before_gateway | Bug 3 |
| test_startup_order_cache_before_gateway | Bug 3 |
| test_startup_order_gateway_before_worker | Bug 3 |
| test_startup_order_worker_before_notification | Bug 3 |

Run them:

    # Inside the container, after running the validator
    pytest /app/tests/ -v

A passing run looks like:

    PASSED tests/test_outputs.py::test_report_file_exists
    PASSED tests/test_outputs.py::test_report_top_level_keys
    PASSED tests/test_outputs.py::test_deployment_name
    PASSED tests/test_outputs.py::test_timestamp_format
    PASSED tests/test_outputs.py::test_all_five_services_present
    PASSED tests/test_outputs.py::test_auth_service_healthy
    PASSED tests/test_outputs.py::test_api_gateway_healthy
    PASSED tests/test_outputs.py::test_cache_service_healthy
    PASSED tests/test_outputs.py::test_worker_service_unhealthy
    PASSED tests/test_outputs.py::test_notification_service_healthy
    PASSED tests/test_outputs.py::test_service_criticality_values
    PASSED tests/test_outputs.py::test_readiness_score
    PASSED tests/test_outputs.py::test_critical_services_healthy
    PASSED tests/test_outputs.py::test_overall_status_degraded
    PASSED tests/test_outputs.py::test_startup_order_has_all_services
    PASSED tests/test_outputs.py::test_startup_order_auth_before_gateway
    PASSED tests/test_outputs.py::test_startup_order_cache_before_gateway
    PASSED tests/test_outputs.py::test_startup_order_gateway_before_worker
    PASSED tests/test_outputs.py::test_startup_order_worker_before_notification

    19 passed in 0.12s

Step 9: Running as a Terminal Bench 2.0 Task

This repository is also a valid Terminal Bench 2.0 task submission. You can run it against an AI agent to measure how reliably the agent finds all five bugs.

    pip install bespokelabs-harbor
    export GROQ_API_KEY=<your-key>

    # Verify the oracle (the task must be solvable before you can score agents against it)
    harbor run -p ./deployment-health-validator -a oracle -q

    # Run an agent trial; k=10 gives a statistically meaningful success rate
    harbor run -p ./deployment-health-validator \
      -a terminus-2 \
      -m groq/moonshotai/kimi-k2-instruct-0905 \
      -k 10

The Difficulty Calibration Story

Getting the task into the "hard" range (between 0% and 70% agent success) required five iterations:

| Iteration | Bug 2 Design | Agent Success |
|---|---|---|
| 1 | "healthy" missing from accepted values | 100% (too easy) |
| 2 | HTTP-only check, no body parsing | 0% (too hard) |
| 3 | No body check, but explicit hint about valid values | 90% (still too easy) |
| 4 | No body check, vague hint about inspecting body | 0% (agents ignore it) |
| 5 (final) | Wrong field name with plausible default | ~40–60% (correct range) |

The key insight: a bug must produce plausible-looking output without throwing exceptions. A validator that crashes is trivially easy to fix. A validator that silently produces wrong answers is genuinely hard, because you have to know what the correct answer should be before you can spot the discrepancy.

This is the same principle behind production incident post-mortems. The hardest outages aren't the ones where something crashes; they're the ones where something runs successfully while doing the wrong thing.

Key Takeaways

HTTP status codes are not health signals. Always parse the response body and check the semantic status field. A 200 OK with {"status": "degraded"} means the service is degraded.

Read your YAML carefully. Manifest files accumulate legacy keys over time. Know which section of the config is authoritative. Comment it explicitly.

Topological sort edge direction matters. In Kahn's algorithm, an edge from A to B means A comes before B. If your dependency means "A must exist before B starts," the edge is A → B, with in_degree[B] += 1. It's easy to get this exactly backwards.

Weighted scoring should reflect business priority. Equal weights mean a low-priority background worker failing counts the same as your authentication service being down. That's not true in production.

Critical service filtering should be explicit. If your alerting fires "critical" when any service is unhealthy, you'll desensitize your on-call team within a week. Be precise about which services actually matter for the critical threshold.

Test with assertions about semantic values, not just structure. Checking that deployment_report.json exists is not enough. Check that worker-service.status == "unhealthy", that readiness_score == 0.9, and that startup_order.index("auth-service") < startup_order.index("api-gateway").
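
Written as pytest-style functions, those semantic checks might look like the following. This is a sketch, not the repository's actual test_outputs.py: the inline REPORT dict stands in for the parsed deployment_report.json so the snippet runs on its own, and the function names are made up.

```python
# Stand-in for json.load(open("/app/deployment_report.json")), trimmed to
# the fields the assertions below touch.
REPORT = {
    "readiness_score": 0.9,
    "service_statuses": {
        "worker-service": {"status": "unhealthy", "http_status": 200},
    },
    "startup_order": [
        "auth-service", "cache-service", "api-gateway",
        "worker-service", "notification-service",
    ],
}


def test_worker_reported_unhealthy():
    # HTTP 200 alone must not be enough to count as healthy.
    assert REPORT["service_statuses"]["worker-service"]["status"] == "unhealthy"


def test_readiness_score_value():
    # Compare with a tolerance rather than exact float equality.
    assert abs(REPORT["readiness_score"] - 0.9) < 1e-9


def test_auth_starts_before_gateway():
    order = REPORT["startup_order"]
    assert order.index("auth-service") < order.index("api-gateway")
```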