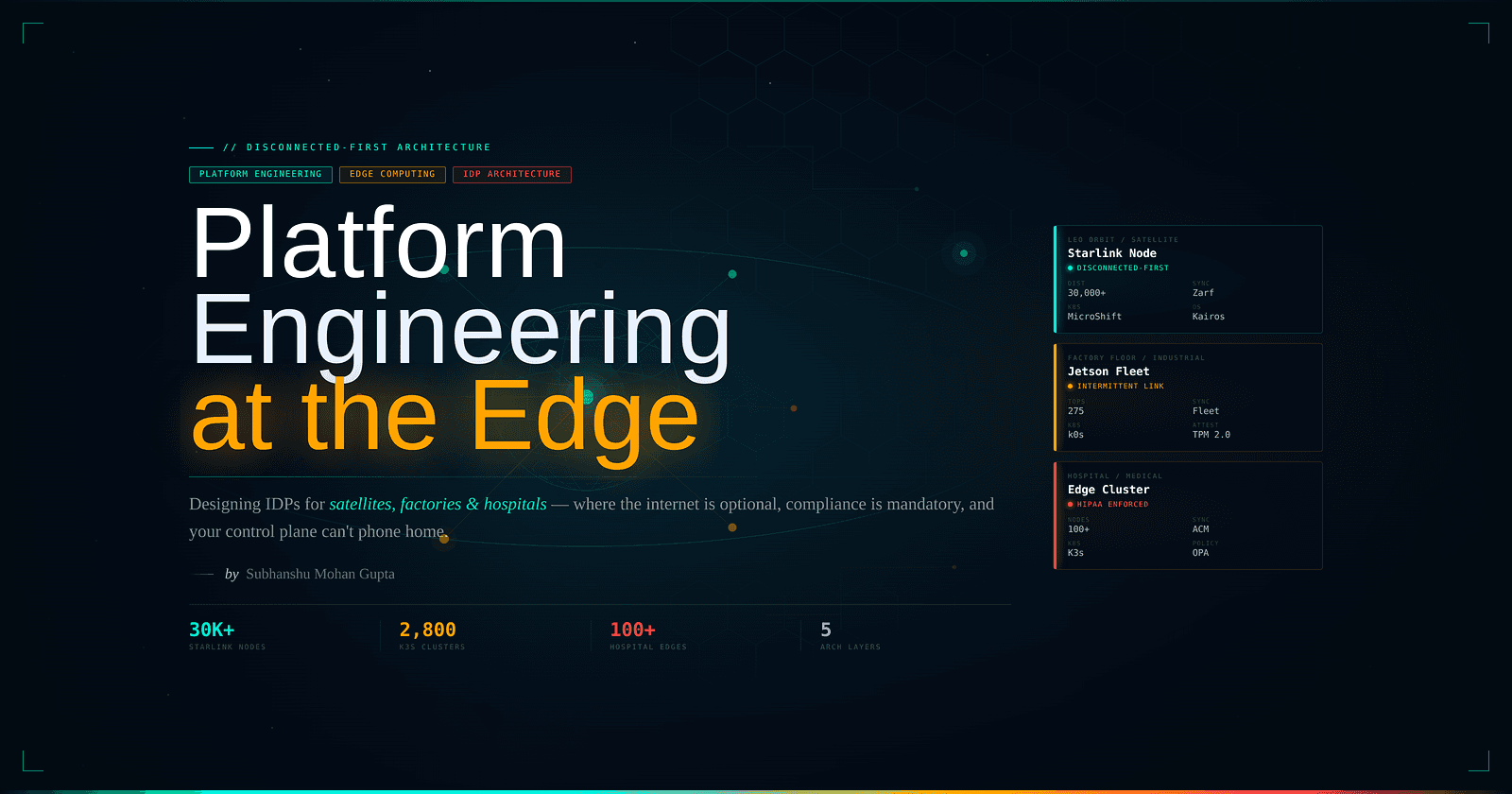

Platform Engineering at the Edge

Designing IDPs for Satellites, Factories and Hospitals

No mainstream Internal Developer Platform (IDP) today supports disconnected edge environments. Backstage, Port, and Cortex all assume always-on cloud connectivity, yet organizations like GE HealthCare (100+ hospital-edge Kubernetes clusters), Chick-fil-A (2,800+ restaurant clusters), and Axiom Space (MicroShift on the International Space Station) are already running containerized workloads where the internet is a luxury. Building an IDP for the edge requires rethinking every assumption: state sync becomes eventually consistent, GitOps becomes store-and-forward, and compliance enforcement must work with zero network access. The tooling exists: K3s, Zarf, Fleet, OPA Gatekeeper, SPIFFE/SPIRE, and CRDTs. Assembling them into a coherent platform, however, demands architectural patterns fundamentally different from cloud-native defaults.

Edge Compute Is Already Running Containers at Surprising Scale

The edge is no longer a future state. SpaceX operates 30,000+ Linux nodes in orbit across the Starlink constellation, with each satellite containing roughly 60 Linux computers alongside custom ASICs from STMicroelectronics (which delivers 5+ million chips per day to SpaceX). While SpaceX hasn't confirmed containers in orbit, their ground infrastructure runs Kubernetes, Docker, Kafka, and HBase extensively, serving 9+ million users with software updates pushed to satellites weekly. The V3 satellites deliver over 1 Tbps fronthaul throughput per bird.

On factory floors, NVIDIA's Jetson Orin lineup delivers 40 to 275 TOPS of AI inference in form factors consuming 7 to 60W. The AGX Orin 64GB (2048 CUDA cores, 64 Tensor cores, 64GB LPDDR5) runs full containerized workloads via the NVIDIA Container Runtime, included in every JetPack release since 4.2.1. JetPack 6.2 (January 2025) introduced "Super Mode", raising generative AI performance on Orin Nano/NX modules by up to 2x, while JetPack 7.0 (2025) brought the Jetson AGX Thor with MIG support, CUDA 13.0, and a preemptable real-time kernel on Ubuntu 24.04.

In healthcare, Advantech's POC-8 series medical panel PCs carry IEC 60601-1 certification with Intel Core i7-9700E processors and optional NVIDIA MXM GPUs, fully capable of running containers for surgical AI. GE HealthCare's Edison platform already manages 100+ Kubernetes clusters across hospital edge sites via Spectro Cloud Palette, with local OCI registries enabling air-gapped container distribution. Their architecture supports fully disconnected operation because it must; these are life-critical applications where cloud outages cannot translate to clinical failures.

Why Every Major IDP Fails at the Edge

Backstage dominates the IDP market with an 89% share across 3,400+ organizations serving 2 million developers. Its architecture, a TypeScript/Node.js backend with a React frontend, a PostgreSQL database, and a plugin ecosystem of 200+ extensions, represents the state of the art for cloud-native developer portals. The September 2024 release of the New Backend System (1.0 stable) introduced dependency injection, and the Kubernetes plugin provides multi-cluster visibility by querying API servers directly.

Port ($60M Series C in 2024) offers a pure SaaS model with blueprint-based entity definitions and an API-first architecture. All data ingestion flows through api.getport.io. Cortex provides self-hosted Helm charts alongside its cloud offering, with push-model Kubernetes agents and 60+ integrations. All three platforms share an assumption that is fatal at the edge: persistent, reliable network connectivity.

| Capability | Backstage | Port | Cortex |
|---|---|---|---|
| Self-hosted | ✅ | ❌ (SaaS only) | ✅ (Helm) |
| Offline operation | ❌ | ❌ | ❌ |
| Air-gapped support | ❌ | ❌ | ❌ |
| Distributed catalog | ❌ | ❌ | ❌ |
| Store-and-forward sync | ❌ | ❌ | ❌ |
| Edge cluster scale (1000+) | ❌ | ⚠️ | ⚠️ |

Backstage's Kubernetes plugin assumes direct API server access; Mercedes-Benz filed GitHub issue #6967 requesting dynamic cluster supply because the static cluster configuration couldn't handle their growing fleet. Port's K8s exporter requires continuous outbound internet to push data. Cortex's agent model requires persistent connectivity to report state. None implement offline-capable UIs, local data caching, CRDT-based merge strategies, or async action queuing. The closest thing to an edge-native developer platform today is Spectro Cloud Palette, which offers a Local UI for field engineers and manages edge clusters in air-gapped environments, but it's an infrastructure management platform, not a full IDP.

An edge-native IDP would need a federated software catalog with local instances that operate independently and sync via CRDTs when connectivity returns, offline self-service workflows with queued actions, local golden path templates bundled with the platform, and store-and-forward telemetry that compresses and filters before transmitting. No one has built this yet.
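To make the "sync via CRDTs" idea concrete, here is a minimal sketch of a federated catalog modeled as a last-writer-wins map. It is an illustration under stated assumptions, not an existing IDP feature: `CatalogReplica`, `Entry`, and the per-site logical clock are hypothetical names, and a real catalog would likely need richer merge rules than "highest timestamp wins".

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Entry:
    """One catalog record plus the metadata needed to merge it."""
    value: dict            # e.g. {"owner": "radiology"}
    timestamp: int         # logical clock value at write time
    site_id: str           # tie-breaker when timestamps collide
    deleted: bool = False  # tombstone so a delete survives a merge


@dataclass
class CatalogReplica:
    """Hypothetical per-site catalog: a last-writer-wins map keyed by entity name."""
    site_id: str
    clock: int = 0
    entries: dict = field(default_factory=dict)

    def put(self, name: str, value: dict) -> None:
        self.clock += 1
        self.entries[name] = Entry(value, self.clock, self.site_id)

    def delete(self, name: str) -> None:
        self.clock += 1
        self.entries[name] = Entry({}, self.clock, self.site_id, deleted=True)

    def merge(self, other: "CatalogReplica") -> None:
        """Keep, per key, the entry with the highest (timestamp, site_id) pair."""
        for name, theirs in other.entries.items():
            mine = self.entries.get(name)
            if mine is None or (theirs.timestamp, theirs.site_id) > (mine.timestamp, mine.site_id):
                self.entries[name] = theirs
        # Advance the local clock so later local writes order after everything seen.
        self.clock = max(self.clock, other.clock)

    def view(self) -> dict:
        """Live entries only; tombstones are hidden from readers."""
        return {n: e.value for n, e in self.entries.items() if not e.deleted}


# Two sites edit independently while disconnected, then exchange state.
hospital = CatalogReplica("hospital-a")
factory = CatalogReplica("factory-b")
hospital.put("imaging-api", {"owner": "radiology"})
factory.put("imaging-api", {"owner": "vision"})
factory.put("plc-gateway", {"owner": "controls"})

hospital.merge(factory)
factory.merge(hospital)
assert hospital.view() == factory.view()  # both replicas converge
```

Because the merge is commutative and idempotent, the order and number of sync attempts do not matter, which is the property that makes this style of catalog tolerant of flaky links. The trade-off is that one of two concurrent writes is silently discarded.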

Lightweight Kubernetes Distributions Make Disconnected Operation Possible

Three distributions compete for the edge Kubernetes space, each with distinct air-gapped strategies.

K3s (v1.33.3+k3s1) from Rancher/SUSE remains the most deployed, requiring just 512MB RAM for an agent node. Air-gapped installation uses a tarball-based approach: download the k3s-airgap-images archive and binary on a connected machine, copy them to /var/lib/rancher/k3s/agent/images/ on the target, and run INSTALL_K3S_SKIP_DOWNLOAD=true ./install.sh. K3s offers embedded SQLite (single-server) or embedded etcd (HA with 3+ servers), and its auto-deploying manifests feature applies any YAML placed in /var/lib/rancher/k3s/server/manifests/ automatically, including CRDs. Version 1.33.1 added conditional image import via .cache.json to skip re-importing unchanged tarballs on restart.

MicroShift (4.17) from Red Hat is OpenShift stripped to its essentials: 2 cores, 2GB RAM, single-node only, with OVN-Kubernetes networking and LVMS storage. Its killer feature for edge is deep RHEL for Edge integration: ostree-based immutable OS images built with composer-cli, with Greenboot health checks that automatically roll back failed updates by rebooting into the previous known-good ostree commit. Everything bakes into a single ISO installable from USB, including all RPM packages, container images, and MicroShift itself. For disconnected environments, combine a mirror registry with a local RPM mirror via reposync or Red Hat Satellite.

k0s (v1.34.3+k0s.0) from Mirantis pushes resource minimalism further: 0.5GB RAM for a worker. It's a single static binary embedding containerd, runc, kubectl, and CNI plugins with zero host OS dependencies. Uniquely, k0s supports RISC-V alongside x86_64, ARM64, and ARMv7. Its Autopilot operator handles automated rolling updates via Plan and UpdateConfig CRDs, with safety checks verifying all controllers report /ready before proceeding. Air-gapped bundles are generated with k0s airgap bundle-artifacts, and spec.images.default_pull_policy: Never prevents any internet pulls.

```yaml
# k0s air-gapped deployment via k0sctl
apiVersion: k0sctl.k0sproject.io/v1beta1
kind: Cluster            # k0sctl configs use kind "Cluster"
spec:
  k0s:
    version: v1.34.3+k0s.0
  hosts:
    - role: controller
      ssh:
        address: 10.0.0.1
      # Upload the local binary instead of downloading it on the host
      uploadBinary: true
      k0sBinaryPath: ./k0s
    - role: worker
      ssh:
        address: 10.0.0.2
      uploadBinary: true
      files:
        # Image bundle is picked up from this directory on the worker
        - src: ./airgap-bundle-amd64.tar
          dstDir: /var/lib/k0s/images
```

GitOps Breaks Gracefully, But Edge Needs Store-and-Forward

Standard GitOps tools degrade predictably when disconnected. Flux CD (v2.8.1) continues enforcing the last-known-good state; the kustomize-controller and helm-controller keep reconciling from the last successfully fetched artifact while the source-controller logs FetchFailed and retries at the configured interval. Changes committed to Git while disconnected simply aren't picked up until reconnection. ArgoCD (v3.1) behaves similarly in push mode: applications targeting unreachable clusters show Unknown status, but existing workloads continue running. Neither tool offers offline queueing or store-and-forward.

Three solutions address this gap directly.

Zarf (v0.71.1, by Defense Unicorns) solves air-gapped GitOps through physical transport. A zarf.yaml declares all needed artifacts, including container images, Helm charts, Kubernetes manifests, and Git repositories. Then zarf package create bundles everything into a single compressed .tar.zst tarball. On the disconnected target, zarf init bootstraps a K3s cluster (optional), deploys an in-cluster OCI registry, and installs a mutating webhook (zarf-agent) that automatically rewrites all image references to point to the local registry. The init package uses an ingenious bootstrap: the registry image is split into 512KB chunks stored as ConfigMaps (fitting etcd's 1MB limit), then a statically compiled Rust binary reassembles them. Zarf also deploys Gitea as an in-cluster Git server, enabling full GitOps workflows entirely offline.
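The chunk-and-reassemble bootstrap described above can be sketched in a few lines. This is not Zarf's code (its injector is a Rust binary); it is a minimal Python illustration of the idea, assuming the 512KB chunk size quoted in the text and a SHA-256 digest to confirm the reassembled payload. The function names are hypothetical.

```python
import hashlib

CHUNK_SIZE = 512 * 1024  # chunk size quoted in the text; each chunk stays under 1MB


def split_payload(payload: bytes, chunk_size: int = CHUNK_SIZE) -> tuple[list[bytes], str]:
    """Split a payload into fixed-size chunks and return them with its SHA-256 digest."""
    chunks = [payload[i:i + chunk_size] for i in range(0, len(payload), chunk_size)]
    return chunks, hashlib.sha256(payload).hexdigest()


def reassemble(chunks: list[bytes], expected_sha256: str) -> bytes:
    """Concatenate chunks in order; refuse to return data that fails the digest check."""
    payload = b"".join(chunks)
    if hashlib.sha256(payload).hexdigest() != expected_sha256:
        raise ValueError("reassembled payload does not match expected digest")
    return payload


# Stand-in for a registry image tarball (1,280,000 bytes).
image = bytes(range(256)) * 5000
chunks, digest = split_payload(image)
assert all(len(c) <= CHUNK_SIZE for c in chunks)
assert reassemble(chunks, digest) == image
```

The digest check matters more at the edge than in the cloud: a chunk corrupted on removable media should fail loudly at bootstrap rather than produce a subtly broken registry.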

Fleet by Rancher uses a pull-based architecture designed for up to 1 million clusters. The Fleet Agent on each downstream cluster initiates outbound connections to the management cluster, with no inbound access required. Git content is compiled into Bundles on the management cluster, and agents fetch BundleDeployments when connectivity permits. With correctDrift.enabled: true, Fleet automatically reconciles any drift. This pull model naturally handles intermittent connectivity: when offline, existing deployments persist; when reconnected, the agent pulls pending updates.
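The behavior of a pull-based agent under intermittent connectivity can be sketched as a small reconcile loop. This is a simplified model, not Fleet's implementation: `PullAgent` and its `fetch`, `observe`, and `apply` callbacks are hypothetical, and the "hub" is just a dictionary.

```python
from typing import Callable, Optional


class PullAgent:
    """Hypothetical edge agent: pulls desired state outbound, never accepts inbound pushes."""

    def __init__(
        self,
        fetch: Callable[[], dict],     # raises ConnectionError when the hub is unreachable
        observe: Callable[[], dict],   # returns the state currently running locally
        apply: Callable[[dict], None], # makes the local cluster match a desired state
    ):
        self._fetch = fetch
        self._observe = observe
        self._apply = apply
        self.last_good: Optional[dict] = None

    def reconcile_once(self) -> str:
        try:
            self.last_good = self._fetch()
        except ConnectionError:
            pass  # offline: keep enforcing the cached desired state
        if self.last_good is None:
            return "waiting"  # never connected, nothing cached yet
        if self._observe() == self.last_good:
            return "in-sync"
        self._apply(self.last_good)  # corrects drift or rolls out a pending update
        return "applied"


# Simulated hub and cluster for demonstration.
hub = {"reachable": True, "desired": {"image": "app:1.0"}}
cluster: dict = {}


def fetch() -> dict:
    if not hub["reachable"]:
        raise ConnectionError("hub unreachable")
    return dict(hub["desired"])


def apply(desired: dict) -> None:
    cluster.clear()
    cluster.update(desired)


agent = PullAgent(fetch, lambda: dict(cluster), apply)
agent.reconcile_once()           # online: pulls and applies app:1.0
hub["reachable"] = False
cluster["image"] = "tampered"    # drift while disconnected
agent.reconcile_once()           # offline: restores app:1.0 from the cached state
```

The key design choice is that a failed fetch is not an error path for the workload: the agent simply keeps enforcing what it last saw, and picks up pending changes on the first successful pull.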

Red Hat ACM (2.12.x) provides a hub-spoke model where the klusterlet work-agent on managed clusters periodically checks for ManifestWork resources, applies them locally, and reports status back. When a spoke loses connectivity, ManagedClusterConditionAvailable transitions to Unknown, but all existing ManifestWorks remain enforced. ACM's integration with OpenShift GitOps enables Zero Touch Provisioning (ZTP) for MicroShift clusters from Git-stored SiteConfig and PolicyGenTemplate CRDs.

The ArgoCD Agent project (argoproj-labs) represents the newest approach: a lightweight pull-mode agent where edge clusters initiate connections back to the hub, eliminating the need for any inbound network access to edge environments. It is currently pre-GA but architecturally significant for edge at scale.

Compliance Enforcement Works Offline (Regulations Don't Care About Your Network)

OPA Gatekeeper (v3.21.0) enforces admission control entirely locally. Policies stored as ConstraintTemplate and Constraint CRDs live in the cluster's etcd; the validating webhook intercepts API requests at the kube-apiserver, evaluates Rego policies in-process, and returns allow/deny decisions with zero external network calls. For distributing policy updates to edge nodes, bundle Rego policies into .tar.gz files, transport via physical media, and serve from a local HTTP server. OPA supports cryptographic bundle signing via JWT verification, ensuring policy integrity on untrusted nodes.
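The integrity check behind signed policy bundles can be illustrated with a simplified stand-in. The sketch below is not OPA's signature format (OPA uses a JWT carrying file hashes); it assumes a pre-shared key and HMAC-SHA256 so that it runs with the standard library alone. `sign_bundle` and `verify_bundle` are hypothetical names.

```python
import hashlib
import hmac
import json


def sign_bundle(files: dict[str, bytes], key: bytes) -> dict:
    """Build a manifest of per-file SHA-256 digests and sign it with HMAC-SHA256."""
    digests = {name: hashlib.sha256(data).hexdigest() for name, data in sorted(files.items())}
    body = json.dumps(digests, sort_keys=True).encode()
    return {"files": digests, "signature": hmac.new(key, body, hashlib.sha256).hexdigest()}


def verify_bundle(files: dict[str, bytes], manifest: dict, key: bytes) -> bool:
    """Return True only if the manifest is authentic and every file matches it exactly."""
    body = json.dumps(manifest["files"], sort_keys=True).encode()
    expected = hmac.new(key, body, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # manifest was altered or signed with a different key
    actual = {name: hashlib.sha256(data).hexdigest() for name, data in files.items()}
    return actual == manifest["files"]  # rejects modified, missing, and extra files


# Assumed example content: one Rego policy file and a demo key.
policies = {"policies/deny_latest.rego": b"package k8s\n\ndeny[msg] { false }\n"}
key = b"demo-key-distributed-out-of-band"
manifest = sign_bundle(policies, key)
assert verify_bundle(policies, manifest, key)
```

Verification needs only the bundle, the manifest, and a key provisioned ahead of time, so an edge node can reject a tampered USB stick without contacting anything. A production setup would more plausibly use asymmetric keys so that edge nodes hold no signing secret.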

SBOM verification without network is fully supported across the major tools. Syft generates SBOMs offline against local images. Grype scans offline by pre-downloading the vulnerability database from toolbox-data.anchore.io and hosting it locally. Trivy copies its databases to a private registry via ORAS CLI and runs with TRIVY_OFFLINE_SCAN=true. Cosign (v2.4.1) verifies signatures offline using --offline --local-image, with stapled inclusion proofs eliminating the need to contact the Rekor transparency log. The CNCF Notation project (v1.1.0) supports signing images on local disk and integrates with Ratify for Kubernetes admission verification through Gatekeeper.

The regulatory landscape varies dramatically by sector.

HIPAA (45 CFR §164.312) requires encryption at rest (etcd encryption via KMS provider, LUKS for PVs), encryption in transit (TLS 1.2+ for all API communication, mTLS via service mesh), comprehensive audit logging, RBAC with unique user IDs, and automatic logoff. PHI must be encrypted at every location it exists, including containers, volumes, host filesystems, and log aggregators.

FDA 21 CFR Part 11 (updated guidance October 2024) mandates computer-generated audit trails recording operator identity and timestamps for all create/modify/delete actions, which cannot be altered. Electronic signatures require at least two identification components. Immutable container images directly support Part 11's integrity requirements, and GitOps workflows provide version-controlled, auditable deployment histories. Edge devices must maintain audit logs locally during disconnected periods.
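One common way to keep a local audit trail tamper-evident during disconnected periods is to hash-chain its records. The sketch below is an illustration only, not a Part 11 compliance recipe: the field names and the `append_record` and `verify_chain` helpers are assumptions, and it makes alteration detectable rather than impossible.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record: dict) -> str:
    """Hash every field except the record's own hash, in a stable key order."""
    payload = {k: v for k, v in record.items() if k != "hash"}
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()


def append_record(log: list[dict], operator: str, action: str, timestamp: str) -> dict:
    """Append an audit record that commits to the hash of the record before it."""
    record = {
        "operator": operator,
        "action": action,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    record["hash"] = _digest(record)
    log.append(record)
    return record


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash and link; any edit, reorder, or removal mid-chain fails."""
    prev_hash = GENESIS
    for record in log:
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(record):
            return False
        prev_hash = record["hash"]
    return True


audit_log: list[dict] = []
append_record(audit_log, "jdoe", "create deployment/dose-calc", "2025-01-10T08:00:00Z")
append_record(audit_log, "asmith", "modify configmap/thresholds", "2025-01-10T09:30:00Z")
assert verify_chain(audit_log)
```

When connectivity returns, the edge site can forward the whole chain and the hub can verify it independently. Truncating the tail of the log is not caught by this scheme on its own, so the latest hash would need to be anchored somewhere else as well.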

IEC 62443 for industrial systems defines Security Levels 1 through 4 (from accidental misuse through nation-state threats) and seven Foundational Requirements including identification/authentication, restricted data flow, and system integrity. The zones-and-conduits model maps directly to Kubernetes Network Policies (zone boundaries) and ingress controllers (conduit controls). The 2024 update to IEC 62443-4-1 now explicitly requires SBOMs from component suppliers.

FCC Part 25 for satellites focuses on spectrum management and orbital debris, with no compute-specific requirements. The FCC proposed replacing Part 25 with new "Part 100" rules in October 2025. ITAR/EAR export controls are the real concern: the October 2024 landmark reform created the first-ever ITAR definition of "spacecraft" and new license exceptions for allied nations, but high-performance processors (10+ TFLOPS) and imaging sensors (<30cm resolution) remain controlled.

Real Deployments That Prove the Architecture Works

The most instructive case studies span all three target environments.

Axiom Space launched MicroShift to the ISS in August 2025 aboard SpaceX CRS-33, running Red Hat Device Edge on the AxDCU-1 data center unit. This represents the first Kubernetes-based containerized computing in orbit, with delta updates minimizing bandwidth consumption and automated rollback for self-healing. The system runs AI/ML workloads for supervised autonomy and life sciences research, reducing dependence on costly satellite downlinks.

Loft Orbital raised €170M Series C in January 2025 and operates a "virtual missions" model, where customers deploy software to already-orbiting YAM satellites without building hardware. Their YAM-9 satellite demonstrated the first commercial four-node heterogeneous compute cluster in orbit. Customers like Helsing run real-time AI for RF signal intelligence, while SkyServe runs wildfire tracking. The CNCF KubeEdge project demonstrated on-orbit target identification accuracy improvements of over 50% on Chinese satellites using a lightweight Sedna AI inference model.

Chick-fil-A operates approximately 2,800 K3s clusters, one per restaurant, on Intel NUC hardware (3 nodes, ~8GB RAM each, ~$1,000 per site). Their custom "Vessel" agent clones a per-restaurant Git repository and applies manifests via kubectl apply. The system processes billions of MQTT messages monthly from IoT devices (fryers, grills, tablets). Chief Architect Brian Chambers emphasizes that eventual consistency is "the only viable model for edge"; not all clusters are identical at any time, but all converge on a golden image over days to weeks.

The Home Depot runs 2,300+ K3s clusters managed by SUSE Rancher, processing 5.5 billion documents monthly with 4-hour chain-wide deployments across 6,900+ machines. Distinguished Engineer Dillon TenBrink's eight lessons from KubeCon 2025 highlight that storage at the edge remains the hardest unsolved problem ("I personally would have no storage at the edge if possible") and that eventual consistency must be embraced rather than fought.

DoD Platform One's Big Bang deploys across cloud, air-gapped, and classified environments using ArgoCD for GitOps with Iron Bank hardened container images. Defense Unicorns' Zarf powers air-gapped delivery for submarines and classified networks. The U-2 spy plane received new AI/ML container software deployed in just 12 days, with over-the-air container updates decoupled from airworthiness hardware certification.

Architecture Patterns for a Disconnected World

The theoretical foundation for edge state synchronization comes from a seminal 2021 paper, "Rearchitecting Kubernetes for the Edge" (Jeffery, Howard, and Mortier, EdgeSys '21), which proposes replacing etcd's Raft consensus with CRDT-based eventually consistent storage. Their analysis shows etcd writes constitute ~30% of Kubernetes API requests, and strong consistency becomes a bottleneck at edge scale. A CRDT-based datastore exposes the same etcd API but allows reads/writes to any single node without coordination, with lazy background sync resolving conflicts via mathematical merge functions. Follow-up work by Sassi, Jensen, and Mortier (March 2025) tested these concepts using emulated edge clusters.

For practical state synchronization, NATS (v2.10+, CNCF Incubating) provides the most edge-optimized message broker. Its Leaf Node pattern runs a local NATS server on each edge device that syncs with the cloud cluster on reconnection. Sensor data persists to a local JetStream stream; on connectivity return, the leaf node syncs automatically in order without duplication. NATS is a single 15MB binary with no external dependencies. Volvo and Schaeffler use it in production for fleet management.
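The "persist locally, then sync in order without duplication" behavior can be modeled without any NATS dependency. The sketch below is a plain Python and SQLite stand-in, not the NATS or JetStream API: `Outbox` and `Hub` are hypothetical names, and deduplication relies on a monotonically increasing sequence number.

```python
import sqlite3
from typing import Callable


class Outbox:
    """Hypothetical edge-side buffer: durable, ordered, and safe to replay."""

    def __init__(self, path: str = ":memory:"):
        self.db = sqlite3.connect(path)
        # AUTOINCREMENT guarantees sequence numbers are never reused after deletes.
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS outbox "
            "(seq INTEGER PRIMARY KEY AUTOINCREMENT, payload TEXT NOT NULL)"
        )
        self.db.commit()

    def record(self, payload: str) -> int:
        cur = self.db.execute("INSERT INTO outbox (payload) VALUES (?)", (payload,))
        self.db.commit()
        return cur.lastrowid

    def flush(self, send: Callable[[int, str], None]) -> int:
        """Send pending rows in sequence order; stop at the first connection failure."""
        sent = 0
        rows = self.db.execute("SELECT seq, payload FROM outbox ORDER BY seq").fetchall()
        for seq, payload in rows:
            try:
                send(seq, payload)
            except ConnectionError:
                break  # keep this row and everything after it for the next attempt
            self.db.execute("DELETE FROM outbox WHERE seq = ?", (seq,))
            self.db.commit()
            sent += 1
        return sent


class Hub:
    """Receiver that ignores anything at or below the highest sequence already stored."""

    def __init__(self) -> None:
        self.last_seq = 0
        self.messages: list[str] = []

    def receive(self, seq: int, payload: str) -> None:
        if seq <= self.last_seq:
            return  # duplicate from a retried flush
        self.messages.append(payload)
        self.last_seq = seq


outbox, hub_side = Outbox(), Hub()
for reading in ["temp=21.5", "temp=21.7", "temp=22.0"]:
    outbox.record(reading)       # works with no connectivity at all
outbox.flush(hub_side.receive)   # on reconnect: delivered in order
assert hub_side.messages == ["temp=21.5", "temp=21.7", "temp=22.0"]
```

Rows are deleted only after a successful send, so a crash between send and delete produces a retry rather than a loss, and the receiver's sequence check turns that retry into a no-op.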

SPIFFE/SPIRE (CNCF Graduated) solves workload identity at the edge through nested topology. A top-level SPIRE Server holds the root CA, while downstream SPIRE Servers at edge sites obtain intermediate CAs via the Workload API. Critically, SVID verification is entirely local; workloads verify peer identities using cached trust bundles with zero network calls. During disconnection, the edge SPIRE Server continues issuing SVIDs using its cached intermediate CA until the configurable TTL expires.

Keylime (CNCF) enables cryptographic attestation of untrusted edge nodes via TPM 2.0. Before deploying workloads, the Verifier validates measured boot PCR values and IMA (Integrity Measurement Architecture) runtime measurements against golden values. A 2024 ICCCN paper demonstrated integration with Kubernetes through a custom EdgeNode CRD where an Attestation Controller adjusts RBAC permissions based on attestation events; nodes that fail attestation automatically lose the ability to receive workloads.
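The comparison against golden values rests on how a TPM accumulates measurements: each PCR is extended as the hash of its old value concatenated with the new measurement digest. The sketch below models that for the SHA-256 bank and compares reported PCRs to golden ones. It does not use Keylime's API or a real TPM; `extend`, `replay`, and `attest` are hypothetical helpers, and the measured byte strings are placeholders.

```python
import hashlib


def extend(pcr: bytes, measurement: bytes) -> bytes:
    """TPM-style extend: new PCR = SHA-256(old PCR || SHA-256(measurement))."""
    return hashlib.sha256(pcr + hashlib.sha256(measurement).digest()).digest()


def replay(measurements: list[bytes]) -> bytes:
    """Recompute the PCR value an ordered list of measurements should produce."""
    pcr = bytes(32)  # modeled as starting from 32 zero bytes
    for m in measurements:
        pcr = extend(pcr, m)
    return pcr


def attest(reported: dict[int, bytes], golden: dict[int, bytes]) -> list[int]:
    """Return the PCR indices whose reported value is missing or differs from golden."""
    return sorted(i for i, want in golden.items() if reported.get(i) != want)


# Golden values computed from known-good boot components (placeholder contents).
golden = {
    0: replay([b"firmware-v1", b"bootloader-v1"]),
    4: replay([b"kernel-5.14"]),
}

healthy = dict(golden)
compromised = {0: golden[0], 4: replay([b"kernel-5.14-modified"])}

assert attest(healthy, golden) == []
assert attest(compromised, golden) == [4]  # this node would be denied workloads
```

Because extend is a one-way chain, a node cannot reach a golden PCR value after measuring a modified component, and the order of measurements matters as much as their content.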

Crossplane (v1.20, CNCF Graduated October 2025) extends edge infrastructure management through Composite Resource Definitions that abstract complexity. The hub-and-spoke pattern runs Crossplane centrally to provision edge clusters, then uses provider-helm to install Crossplane into each new cluster with bundled Configuration packages, giving you full management of Crossplane clusters using Crossplane itself. Spectro Cloud's provider-palette adds edge-native host provisioning and Cluster Profiles to the Crossplane ecosystem.

Kairos (CNCF Sandbox, v3.5.0 stable) delivers the immutable OS layer: the operating system IS the container image, distributed via OCI registries. Building custom OS images requires nothing more than a Dockerfile. A/B atomic upgrades push new images to a registry, and nodes upgrade safely with automatic rollback on failure. Optional P2P mesh networking via libp2p/EdgeVPN enables automated node discovery across networks spanning up to 10,000 km.

Testing Disconnected Edge Requires Simulating Disconnection

Chaos Mesh (v2.6+, CNCF Incubating) provides the most comprehensive network partition testing for edge scenarios. Its NetworkChaos CRD supports partition, delay, packet loss, duplication, reordering, and bandwidth limiting:

```yaml
apiVersion: chaos-mesh.org/v1alpha1
kind: NetworkChaos
metadata:
  name: edge-hub-partition
spec:
  action: partition
  mode: all
  # Pods on the "edge" side of the partition
  selector:
    namespaces: [edge-workloads]
    labelSelectors:
      app: edge-service
  direction: both
  # Pods on the other side; the target block takes its own mode
  target:
    mode: all
    selector:
      namespaces: [kube-system]
  duration: '30m'
```

LitmusChaos (v3.0+, CNCF Incubating) adds a 2025 innovation: an MCP Server that exposes chaos capabilities via the Model Context Protocol, allowing engineers to trigger experiments from AI assistants using natural language. Its probe system (HTTP, command, Kubernetes, and Prometheus probes) validates steady-state hypotheses at each experiment phase.

For compliance validation in air-gapped environments, kube-bench runs as a standalone Go binary executing CIS Kubernetes Benchmark checks with embedded definitions, no network required. The OpenShift Compliance Operator runs OpenSCAP evaluations via privileged pods with host read access, generating ComplianceRemediation CRDs that can automatically apply fixes. Both tools produce JSON/JUnit output storable locally and exportable via store-and-forward when connectivity returns.

A comprehensive edge testing pipeline should provision multi-cluster environments with k3d (~5 second startup, ~350MB idle per cluster), deploy applications via GitOps, run compliance scans, then systematically inject network partitions to verify autonomous operation, state convergence on reconnection, and data integrity throughout.

The Platform Engineering Gap at the Edge

The tools for edge Kubernetes are mature. K3s, MicroShift, and k0s run reliably on constrained hardware. Zarf solves air-gapped delivery. Fleet and ACM handle multi-cluster GitOps at scale. OPA Gatekeeper enforces policy locally. SPIFFE/SPIRE provides identity without connectivity. Keylime attests node integrity via hardware roots of trust. The missing piece is the integration layer: a coherent Internal Developer Platform that stitches these components together with a federated catalog, offline self-service workflows, and eventually consistent state synchronization.

Three architectural principles emerge from real-world deployments. First, eventual consistency is not a compromise but a requirement; both Chick-fil-A and Home Depot explicitly adopted it after failing with stronger consistency models. Second, the pull model wins for edge GitOps; Fleet, argocd-agent, and ACM's klusterlet all have agents initiating outbound connections, eliminating inbound network requirements. Third, immutability at every layer prevents the configuration drift that makes disconnected environments unmanageable: immutable OS (Kairos/ostree), immutable container images, immutable infrastructure-as-code, and signed, immutable deployment packages (Zarf).

The organization that builds a true edge-native IDP, with CRDT-synchronized catalogs, TPM-attested node enrollment, offline golden paths, and compliance-as-code that works without a network, will unlock platform engineering for the 75% of enterprise data that Gartner predicted would be processed outside traditional data centers by 2025. The satellites, factories, and hospitals aren't waiting.