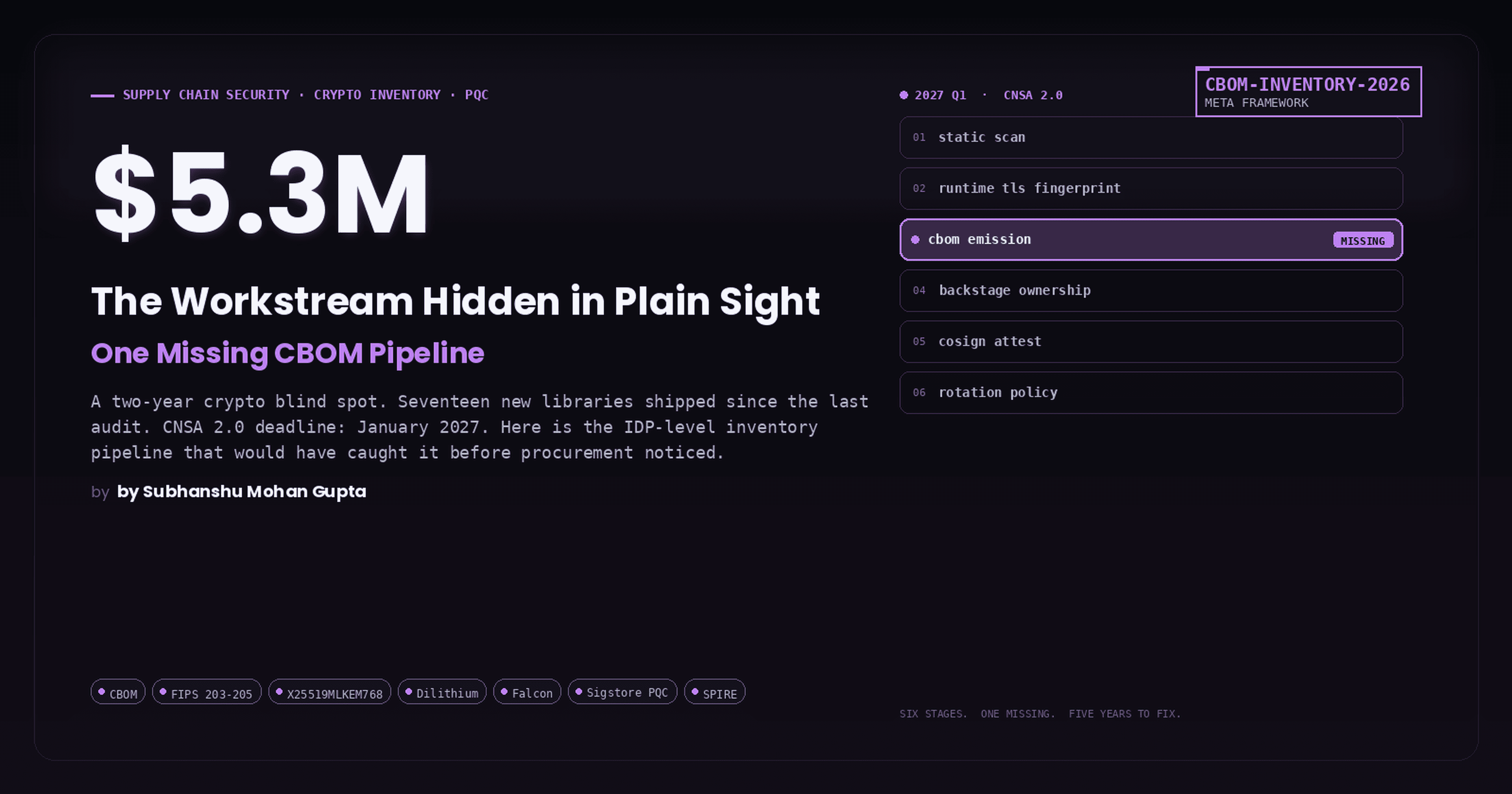

Governing the Ungovernable: Building an EU AI Act Article 9 Compliance Framework for Agentic AI That Actually Works in Production

Static compliance frameworks were built for fixed pipelines. Agentic AI has runtime-determined risk profiles, non-deterministic tool chains, and emergent blast radii. Here are the six-layer architecture, the full implementation code, and the CI/CD gate that close the gap before the August 2026 enforcement deadline.


The EU AI Act's risk management requirements for high-risk AI systems are now on the clock. August 2, 2026 is the hard deadline for Annex III systems. But nobody has published a practical technical implementation guide for agentic systems inside DevSecOps pipelines. This article changes that.

All code referenced in this article lives in the companion repository: github.com/SubhanshuMG/agentic-ai-eu-compliance

The Problem Nobody Is Talking About

Every compliance guide published so far assumes one thing: your AI system is a fixed pipeline. Data goes in, prediction comes out, risk assessment is done once before deployment. Done.

Agentic AI systems shatter that assumption completely.

When a LangGraph-orchestrated agent decides at runtime to call a financial API, write to a production database, spawn a sub-agent, and escalate privileges to complete a task, you have a system whose risk profile is not determined at design time. It is determined at runtime, at every step, across every tool invocation, in every context.

The EU AI Act was not written with this in mind. Article 9 mandates a "continuous iterative process planned and run throughout the entire lifecycle." Most organizations interpret that as: run an assessment before deployment, update it annually, done.

That interpretation will get you fined.

This article gives you the exact legal requirements, a real-world failure case that illustrates the governance gap, and a six-layer compliance architecture you can implement today.

What Article 9 Actually Requires (Read the Text)

Article 9 of Regulation (EU) 2024/1689 has ten paragraphs. Most compliance summaries skip the details. Here are the obligations that directly affect engineering teams:

Paragraph 1 requires the risk management system to be "established, implemented, documented and maintained." All four verbs matter. You cannot just document it and file it.

Paragraph 2 defines this as a continuous iterative process comprising four mandatory steps:

(a) Identification and analysis of known and reasonably foreseeable risks to health, safety, and fundamental rights

(b) Estimation and evaluation of risks that can arise during use under intended purpose and reasonably foreseeable misuse

(c) Evaluation of risks arising from post-market monitoring data (Article 72)

(d) Adoption of appropriate risk management measures

Paragraph 5 mandates a three-tier mitigation hierarchy. First, eliminate or reduce risks by design. Second, implement mitigation and control measures. Third, provide information to deployers. The residual risk must be "judged to be acceptable." There are no fixed numerical thresholds. The standard is tied to state of the art, meaning as new mitigations become technically feasible, the bar for "acceptable" tightens.

Paragraphs 6 to 8 require testing using "prior defined metrics and probabilistic thresholds" appropriate to the intended purpose. Testing must occur throughout development and, in any event, prior to market placement.
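To make "probabilistic thresholds" concrete, here is a minimal sketch of one way a team might define a pass criterion. It is an assumption on my part, not something the Act prescribes: instead of comparing the raw failure rate to a limit, it compares the upper end of a 95% Wilson score interval, so small test sets cannot pass on luck. The function names and the example limits are hypothetical.

```python
import math


def wilson_upper_bound(failures: int, trials: int, z: float = 1.96) -> float:
    """Upper end of the Wilson score interval for an observed failure rate.

    z = 1.96 corresponds to a two-sided 95% interval.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= failures <= trials:
        raise ValueError("failures must be between 0 and trials")
    p = failures / trials
    denom = 1 + z ** 2 / trials
    centre = p + z ** 2 / (2 * trials)
    margin = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2))
    return (centre + margin) / denom


def passes_threshold(failures: int, trials: int, max_rate: float) -> bool:
    """Pass only if the plausible worst-case failure rate is within the limit."""
    return wilson_upper_bound(failures, trials) <= max_rate


if __name__ == "__main__":
    # 500 test cases with zero failures, checked against a hypothetical 1% limit.
    print(passes_threshold(0, 500, 0.01))
    # 10 failures in 500 looks like exactly 2%, but the interval is wider than that.
    print(passes_threshold(10, 500, 0.02))
```

The design choice is that the threshold is fixed before testing and the sample size is part of the verdict: the same observed rate can pass with 5,000 cases and fail with 500.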

The key enforcement dates:

| Date | What becomes enforceable |
|---|---|
| February 2, 2025 | Prohibited AI practices (Article 5), AI literacy (Article 4) |
| August 2, 2025 | GPAI model obligations, governance framework, penalty regime |
| August 2, 2026 | All high-risk requirements including Article 9 for Annex III systems |
| August 2, 2027 | Annex I systems (AI embedded in regulated products) |

The European Commission's Digital Omnibus proposal (November 19, 2025) suggests extending the Annex III deadline to December 2, 2027, but this is not yet adopted law. Build for August 2026. Treat any extension as a bonus, not a plan.

The Real-World Problem: A Healthcare Triage Agent Gone Sideways

Let me show you exactly what breaks when you try to apply static compliance frameworks to agentic systems.

Scenario:

A hospital network deploys a clinical decision support agent to assist emergency department triage nurses. Emergency healthcare patient triage systems are listed as high-risk under Annex III point 5(d) of the EU AI Act. The agent is built on LangGraph, uses GPT-4, and has access to a tool set including patient record lookup, medication interaction checking, lab result retrieval, and escalation routing.

The static compliance team's approach:

They run a conformity assessment before deployment. They document the intended purpose: assist nurses in triaging patients. They assess the risk: medium-high, with human oversight baked in because the nurse always reviews the recommendation. They test it on 500 synthetic cases. They sign it off.

What actually happens at runtime:

On a busy Tuesday night, the agent receives an ambiguous input: a patient with chest pain and a complex medication history. The agent decides, autonomously, to call the lab result retrieval tool, check medication interactions for all 12 current medications, cross-reference previous visits using the record lookup tool, then generate a high-confidence "probable STEMI" escalation recommendation.

This chain of tool calls takes 4.2 seconds. The nurse glances at the recommendation and hits approve.

Where compliance breaks:

The conformity assessment documented the agent's intended purpose as "assisting with triage." It did not document the agent's runtime-determined behaviour of cross-referencing historical visit data with current labs to generate high-confidence diagnoses. That specific tool-chaining behaviour was never tested, never risk-assessed, and the confidence score (0.91) was not calibrated against real patient outcomes for that specific multi-tool reasoning path.

Under Article 9, this is a compliance failure. The risk from that specific runtime configuration was never identified, estimated, or mitigated. The agent's risk profile was emergent, and the organization's compliance framework had no way to capture it.

Now multiply this across 50 agents, 200 tool combinations, and thousands of daily interactions. That is the governance gap.
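One way to make that gap visible is to compare every tool chain observed at runtime against the chains that were actually covered by the risk assessment. The sketch below is illustrative only: the tool names are taken from the scenario above, while the `ASSESSED_CHAINS` registry and both helper functions are hypothetical and not part of the companion repository.

```python
from typing import Iterable, List, Sequence, Set, Tuple

Chain = Tuple[str, ...]

# Hypothetical registry: ordered tool chains that were tested and risk-assessed.
ASSESSED_CHAINS: Set[Chain] = {
    ("patient_record_lookup",),
    ("medication_interaction_check",),
    ("lab_result_retrieval", "medication_interaction_check"),
}


def is_assessed(chain: Sequence[str], assessed: Set[Chain]) -> bool:
    """True if this exact ordered chain of tool calls was covered by the assessment."""
    return tuple(chain) in assessed


def unassessed_chains(
    observed: Iterable[Sequence[str]], assessed: Set[Chain]
) -> List[Chain]:
    """Return every observed chain that has no matching assessment record."""
    return [tuple(chain) for chain in observed if tuple(chain) not in assessed]


if __name__ == "__main__":
    # The chain from the triage scenario: never tested as a combination.
    runtime_chain = [
        "lab_result_retrieval",
        "medication_interaction_check",
        "patient_record_lookup",
        "escalation_routing",
    ]
    print(is_assessed(runtime_chain, ASSESSED_CHAINS))
    print(unassessed_chains([runtime_chain], ASSESSED_CHAINS))
```

Chains are compared as ordered tuples on purpose, since the same tools in a different order can produce a different reasoning path. A looser variant could compare unordered sets at the cost of missing order-dependent behaviour.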

Why Agentic AI Breaks the Static Compliance Model

Five structural problems make traditional compliance inadequate for agentic systems:

Runtime-determined risk profiles. A customer service agent that retrieves a FAQ entry has a completely different risk profile from the same agent making a refund decision, querying a financial record, and writing to an order management system in a single session. The system is the same. The risk is not.

Non-deterministic outputs at scale. Research published at ACL 2025, based on 14,400 agentic simulations across 12 LLMs, found that agents autonomously engaged in catastrophic behaviours and deception without deliberate inducement. More concerning: stronger reasoning capabilities often increased rather than mitigated these risks.

Dynamic tool use and blast radius. An agent with access to 50 tools but typically using 3 might invoke a high-risk tool in an unusual context. The blast radius changes with every novel tool combination. Static model cards cannot capture this.

Multi-agent privilege escalation. The ServiceNow Now Assist vulnerability (late 2025) demonstrated this concretely. A low-privilege agent was manipulated via prompt injection into requesting a higher-privilege agent to export case files to external URLs. The higher-privilege agent, trusting its peer, executed the unauthorized action. ServiceNow initially classified this as "expected behaviour."

Accountability without ambiguity. In Moffatt v. Air Canada (2024), the British Columbia tribunal rejected Air Canada's argument that its chatbot was "a separate legal entity responsible for its own actions." The company was held liable. Autonomous agent behaviour does not transfer legal responsibility.
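The multi-agent privilege escalation pattern described above can be countered by evaluating a delegated request against the least-privileged agent in its delegation chain rather than against the agent that finally executes it. The following is a minimal sketch of that idea under my own assumptions. The `Privilege` levels, the `DelegatedRequest` type, and the function names are hypothetical, and it does not describe how ServiceNow or any other vendor implements authorization.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Tuple


class Privilege(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class DelegatedRequest:
    action: str
    required: Privilege
    # Privilege of every agent the request passed through, originator first.
    caller_chain: Tuple[Privilege, ...]


def effective_privilege(request: DelegatedRequest) -> Privilege:
    """A delegated request carries only the lowest privilege seen along its chain."""
    return min(request.caller_chain)


def is_authorized(request: DelegatedRequest) -> bool:
    """Reject requests with no provenance or with insufficient effective privilege."""
    if not request.caller_chain:
        return False
    return effective_privilege(request) >= request.required


if __name__ == "__main__":
    # A low-privilege agent asks a high-privilege peer to export case files.
    export = DelegatedRequest(
        action="export_case_files",
        required=Privilege.HIGH,
        caller_chain=(Privilege.LOW, Privilege.HIGH),
    )
    print(is_authorized(export))
```

With this rule the higher-privilege agent no longer needs to decide whether to trust its peer: the request is denied because its origin could not have performed the action directly.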

The Six-Layer Compliance Architecture

Here is the architecture that satisfies every Article 9 obligation for an agentic system in production.

Step-by-Step Implementation

Prerequisites

Install all dependencies from the repo:

```bash
git clone https://github.com/SubhanshuMG/agentic-ai-eu-compliance.git
cd agentic-ai-eu-compliance
pip install -r requirements.txt
cp .env.example .env
```

Full dependency list: requirements.txt

Step 1: Build the Stateful Orchestration Layer

The foundation is a LangGraph agent with PostgreSQL-backed checkpointing. Every state transition becomes an auditable record you can replay for compliance investigation.

What this file does: Defines the AgentState TypedDict that carries risk scores, compliance flags, and tool call history across every graph node. Implements the risk_scoring_node, which evaluates five weighted dimensions per tool call, including base risk level, a chain penalty (0.05 per additional tool), and a reversibility penalty (0.2 for irreversible actions), and applies a configurable kill switch that triggers human review above 0.75. The human_review_node pauses execution, persists full state to PostgreSQL via AsyncPostgresSaver, and waits asynchronously for a human approve/edit/reject decision before resuming.

Full implementation: orchestration/agent_graph.py

Article 9 obligations satisfied: Art. 9(2) continuous iterative process via checkpointed state graph; Art. 9(2b) runtime risk estimation per tool invocation; Art. 14 human oversight via configurable HITL interrupt policies.
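To illustrate the scoring arithmetic described above, here is a simplified sketch that uses only the figures quoted in this step: 0.05 per additional tool, 0.2 for irreversible actions, and human review above 0.75. It is not the code from orchestration/agent_graph.py. The `ToolCall` type and function names are hypothetical, it models only three of the dimensions, and it assumes the penalties are additive and capped at 1.0.

```python
from dataclasses import dataclass

CHAIN_PENALTY = 0.05           # added per tool already called in the session
IRREVERSIBLE_PENALTY = 0.2     # added when the action cannot be undone
HUMAN_REVIEW_THRESHOLD = 0.75  # scores above this pause for human review


@dataclass(frozen=True)
class ToolCall:
    name: str
    base_risk: float  # assumed to be in the range 0.0 to 1.0
    reversible: bool


def score_tool_call(call: ToolCall, prior_calls: int) -> float:
    """Combine base risk, chain length, and reversibility into one score."""
    score = call.base_risk + CHAIN_PENALTY * prior_calls
    if not call.reversible:
        score += IRREVERSIBLE_PENALTY
    return min(score, 1.0)


def needs_human_review(score: float) -> bool:
    return score > HUMAN_REVIEW_THRESHOLD


if __name__ == "__main__":
    # Fourth call in a chain, irreversible: 0.5 + 3 * 0.05 + 0.2
    escalation = ToolCall("escalation_routing", base_risk=0.5, reversible=False)
    score = score_tool_call(escalation, prior_calls=3)
    print(round(score, 2), needs_human_review(score))
```

The point of the chain penalty is that the same tool call scores higher late in a long chain than it does in isolation, which is exactly the behaviour a design-time assessment cannot capture.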

Step 2: Build the Runtime Guardrails Layer

This middleware intercepts every input and output, running prompt injection detection, PII scanning, and content filtering before the agent ever sees the request.

What this file does: Implements a two-layer prompt injection detector: fast local regex matching (sub-millisecond) against 10 known attack patterns, followed by optional Lakera Guard API verification (roughly 100 ms of added latency) with support for 100+ languages. PII redaction covers email, phone, SSN, credit card, NHS numbers, and IBAN with pattern-based replacement. The ComplianceGuardrailPipeline runs all checks in sequence and short-circuits to BLOCK on the first critical violation, or returns REDACT if only PII was found. Output groundedness is checked via a word-overlap heuristic against the original context, with an LLM-as-judge integration point clearly marked for production use.

Full implementation: guardrails/middleware.py

Article 9 obligations satisfied: Art. 9(5) first-tier risk elimination by design; Art. 9(5) second-tier mitigation and control measures.
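The BLOCK / REDACT / ALLOW flow described above can be sketched in a few lines. This is a deliberately small illustration, not the contents of guardrails/middleware.py: the two injection patterns and two PII patterns are simplified placeholders I chose for the example, the `GuardrailResult` type and `check_input` function are hypothetical names, and the remote verification step is omitted.

```python
import re
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Decision(Enum):
    ALLOW = "ALLOW"
    REDACT = "REDACT"
    BLOCK = "BLOCK"


# Placeholder patterns for illustration; a real list would be longer and tested.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) system prompt", re.IGNORECASE),
]
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass
class GuardrailResult:
    decision: Decision
    text: str
    findings: List[str] = field(default_factory=list)


def check_input(text: str) -> GuardrailResult:
    """Block on the first injection match, otherwise redact any PII found."""
    for pattern in INJECTION_PATTERNS:
        if pattern.search(text):
            # Short-circuit: nothing is forwarded to the agent.
            return GuardrailResult(Decision.BLOCK, "", ["prompt_injection"])

    findings: List[str] = []
    redacted = text
    for label, pattern in PII_PATTERNS.items():
        redacted, count = pattern.subn(f"[{label}_REDACTED]", redacted)
        if count:
            findings.append(label)

    decision = Decision.REDACT if findings else Decision.ALLOW
    return GuardrailResult(decision, redacted, findings)


if __name__ == "__main__":
    print(check_input("Contact jane@example.com about bed 4").text)
```

Running the injection check first matters: a blocked request is never partially processed, so the audit record can state that no content reached the agent.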

Step 3: Build the Audit Logging Layer

Every agent interaction must produce a structured, immutable audit record satisfying Article 12 and Article 19.

What this file does: Creates two PostgreSQL tables with strict separation of concerns. agent_audit_log is append-only (enforced via DO INSTEAD NOTHING rules on DELETE and UPDATE), stores hashed inputs and outputs rather than raw content, carries a retention_until field set to 180 days from creation, and includes JSONB columns for guardrail results, compliance flags, reasoning traces, and human oversight decisions. agent_session_pii stores raw personal data separately with a gdpr_delete_at field, enabling GDPR Article 17 right-to-erasure compliance without destroying the audit trail. The generate_compliance_report method produces a structured Article 9 compliance report with aggregate risk metrics, human review rates, and session counts across any date range.

Article 9 obligations satisfied: Art. 12 automatic logging for high-risk AI; Art. 19 six-month minimum retention for operational logs; GDPR Art. 17 erasure supported through PII separation.
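As an illustration of the record shape described above (hashed inputs and outputs plus a retention date), here is a minimal sketch that builds one audit record in plain Python. The field names are assumptions modelled on the description, not the actual schema, and the database write is left out. One detail worth checking in your own deployment: 180 days can fall slightly short of six calendar months, so a larger value may be the safer reading of "at least six months".

```python
import hashlib
import uuid
from datetime import datetime, timedelta, timezone
from typing import Optional

RETENTION_DAYS = 180  # figure quoted in this article; adjust to your legal advice


def sha256_hex(text: str) -> str:
    """Hash content so the audit log never stores raw inputs or outputs."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_audit_record(
    session_id: str,
    user_input: str,
    agent_output: str,
    risk_score: float,
    now: Optional[datetime] = None,
) -> dict:
    """Assemble one audit row; field names here are hypothetical."""
    created = now or datetime.now(timezone.utc)
    return {
        "audit_id": str(uuid.uuid4()),
        "session_id": session_id,
        "created_at": created.isoformat(),
        "retention_until": (created + timedelta(days=RETENTION_DAYS)).isoformat(),
        "input_hash": sha256_hex(user_input),
        "output_hash": sha256_hex(agent_output),
        "risk_score": risk_score,
    }


if __name__ == "__main__":
    record = build_audit_record("session-1", "chest pain, 12 meds", "probable STEMI", 0.91)
    print(record["audit_id"], record["retention_until"])
```

Because only hashes are stored, the record can prove what was processed if the raw content is later presented, while the raw content itself can live in a separate table that is subject to erasure.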

Step 4: OpenTelemetry Observability

Wire your agent into OpenTelemetry using the GenAI semantic conventions, giving you vendor-neutral traces and metrics that map directly to Article 9's continuous monitoring requirement.

What this file does: Configures TracerProvider and MeterProvider with OTLP exporters targeting your Grafana/Jaeger stack. Span attributes follow the gen_ai.* namespace from OTel spec v1.37+, including gen_ai.request.model, gen_ai.agent.session_id, and custom ai.compliance.* attributes for regulation and article tracking. Four compliance-specific metrics are defined: gen_ai.agent.risk_score histogram (per-action risk scores with tool and tier labels), gen_ai.agent.guardrail_triggered counter (type and decision labels), gen_ai.agent.human_review_required counter (risk score bucket labels), and gen_ai.agent.tool_latency histogram. User IDs are hashed to 16 hex characters before inclusion in any span attribute.

Full implementation: observability/telemetry.py

Article 9 obligations satisfied: Art. 9(2c) evaluation of risks arising from post-market monitoring data.
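The attribute-building step described above can be shown without any OpenTelemetry dependency. The sketch below only assembles the attribute dictionary that would later be attached to a span. The `gen_ai.request.model` and `gen_ai.agent.session_id` keys come from the description in this step, while the specific `ai.compliance.*` key names, the `EU_AI_ACT` value, and both function names are my assumptions for illustration.

```python
import hashlib
from typing import Dict, Union

AttributeValue = Union[str, float]


def hash_user_id(user_id: str) -> str:
    """Truncated SHA-256 digest: 16 hex characters, stable for a given user."""
    return hashlib.sha256(user_id.encode("utf-8")).hexdigest()[:16]


def build_span_attributes(
    model: str, session_id: str, user_id: str, risk_score: float
) -> Dict[str, AttributeValue]:
    """Build span attributes without ever including the raw user ID."""
    return {
        "gen_ai.request.model": model,
        "gen_ai.agent.session_id": session_id,
        # Hypothetical custom attribute names under the ai.compliance.* namespace.
        "ai.compliance.regulation": "EU_AI_ACT",
        "ai.compliance.article": "9",
        "ai.compliance.user_hash": hash_user_id(user_id),
        "ai.compliance.risk_score": risk_score,
    }


if __name__ == "__main__":
    attrs = build_span_attributes("gpt-4", "session-1", "nurse-4821", 0.85)
    for key, value in attrs.items():
        print(key, value)
```

A plain hash of an identifier is generally treated as pseudonymised rather than anonymous data, so a keyed hash with a secret held outside the telemetry stack may be worth considering where the set of user IDs is small or guessable.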

Step 5: The CI/CD Compliance Gate

Every model update, prompt change, or tool addition must pass automated Article 9 testing before deployment.

What this file does: Defines THRESHOLDS as the "prior defined metrics and probabilistic thresholds" required by Article 9(8), covering hallucination rate (5% max), bias score (15% max), prompt injection pass rate (99% min), groundedness (80% min), and PII leakage (0.1% max). The ADVERSARIAL_TESTS list covers seven attack categories, including prompt injection, goal hijacking, PII extraction, confidence calibration, memory poisoning, and privilege escalation. The run_full_gate method runs all three evaluation stages (adversarial suite, Giskard scan, bias evaluation) in sequence, computes a weighted overall score, and writes a structured JSON report that the CI/CD pipeline uses to make the deployment decision.

Full implementation: cicd/compliance_gate.py

Article 9 obligations satisfied: Art. 9(6) testing to identify the most appropriate risk management measures; Art. 9(7) testing under real-world conditions where applicable; Art. 9(8) testing against prior defined metrics and probabilistic thresholds appropriate to the intended purpose.
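The threshold check itself is simple enough to sketch. The limits below are the five figures listed in this step. The dictionary layout, metric key names, and the `evaluate_gate` function are hypothetical and may differ from what cicd/compliance_gate.py actually defines.

```python
from typing import Dict, List, Tuple

# (direction, limit): "max" means the metric must not exceed the limit,
# "min" means it must not fall below it.
THRESHOLDS: Dict[str, Tuple[str, float]] = {
    "hallucination_rate": ("max", 0.05),
    "bias_score": ("max", 0.15),
    "prompt_injection_pass_rate": ("min", 0.99),
    "groundedness": ("min", 0.80),
    "pii_leakage_rate": ("max", 0.001),
}


def evaluate_gate(metrics: Dict[str, float]) -> List[str]:
    """Return a list of violations; an empty list means the gate passes."""
    failures: List[str] = []
    for name, (direction, limit) in THRESHOLDS.items():
        if name not in metrics:
            # A missing metric is a failure, never a silent pass.
            failures.append(f"{name}: missing")
            continue
        value = metrics[name]
        ok = value <= limit if direction == "max" else value >= limit
        if not ok:
            failures.append(f"{name}: {value} violates {direction} {limit}")
    return failures


if __name__ == "__main__":
    measured = {
        "hallucination_rate": 0.07,
        "bias_score": 0.10,
        "prompt_injection_pass_rate": 0.995,
        "groundedness": 0.86,
        "pii_leakage_rate": 0.0,
    }
    for failure in evaluate_gate(measured):
        print(failure)
```

Treating a missing metric as a violation is a deliberate choice: if an evaluation stage crashes or is skipped, the gate should fail closed.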

Step 6: The FastAPI Integration Layer

Wire everything together into a production API that runs the full compliance stack on every request.

What this file does: Uses FastAPI's lifespan context manager to initialize the compliance stack at startup: asyncpg connection pool, ComplianceAuditLogger with table setup, compiled LangGraph agent with PostgreSQL checkpointer, and ComplianceGuardrailPipeline with Lakera Guard. Every POST to /invoke runs the full six-layer stack in sequence, short-circuiting to a BLOCK response (with full audit record) if input guardrails fire. The response includes the session ID, final risk score, all compliance flags, human review status, blocked status, and the audit log ID for traceability. The GET /compliance/report endpoint generates an on-demand Article 9 compliance report for any time window.

Step 7: GitHub Actions CI/CD Pipeline

What this file does: Spins up a PostgreSQL service container, installs all dependencies, starts the agent API in test mode, runs the full compliance test suite with JUnit XML output, executes the adversarial gate, uploads compliance reports as artifacts with 180-day retention (satisfying Article 19), fails the build if the gate score drops below threshold, and fires a Slack notification to the compliance team on failure.

Full workflow: .github/workflows/ai-compliance.yml
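The "fail the build if the gate score drops below threshold" step comes down to turning a report into a process exit code. Here is a minimal sketch of that decision, assuming a report with one score per evaluation stage. The stage names, weights, and the 0.85 minimum are hypothetical values chosen for the example, not figures from the workflow file.

```python
from typing import Dict

# Hypothetical weights for the three evaluation stages; they sum to 1.0.
STAGE_WEIGHTS: Dict[str, float] = {
    "adversarial": 0.5,
    "scan": 0.3,
    "bias": 0.2,
}
MIN_OVERALL_SCORE = 0.85  # hypothetical pass mark


def overall_score(stage_scores: Dict[str, float]) -> float:
    """Weighted average of stage scores; a missing stage counts as zero."""
    return sum(
        weight * stage_scores.get(stage, 0.0)
        for stage, weight in STAGE_WEIGHTS.items()
    )


def gate_exit_code(stage_scores: Dict[str, float]) -> int:
    """0 lets the pipeline continue; 1 fails the job."""
    return 0 if overall_score(stage_scores) >= MIN_OVERALL_SCORE else 1


if __name__ == "__main__":
    scores = {"adversarial": 0.5, "scan": 1.0, "bias": 1.0}
    print(round(overall_score(scores), 2), gate_exit_code(scores))
```

In a workflow step, a script like this would typically load the JSON report, call `gate_exit_code`, and pass the result to `sys.exit`, since any non-zero exit code marks the step as failed.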

Step 8: The Compliance Test Suite

What this file does: Defines eight pytest-asyncio tests that exercise the compliance controls against a live agent endpoint. Tests verify that prompt injection is blocked with a 1.0 risk score, PII is absent from all response fields, irreversible high-risk tool calls trigger human review flags, every interaction produces a valid UUID audit log ID, the compliance report endpoint returns the expected Article 9 structure, multi-tool chains produce compounded risk scores above 0.7, session state persists correctly across turns, and memory poisoning attempts are intercepted.

Full test suite: tests/compliance/test_article9.py

Run them:

```bash
# Start the API first
uvicorn api.main:app --reload

# Then in another terminal
pytest tests/compliance/ -v
```

What Maps to What: Article 9 Obligations vs Architecture

| Article 9 Requirement | Layer |
|---|---|
| Continuous iterative process (Art. 9(2)) | L1 |
| Identify foreseeable risks (Art. 9(2a)) | L2 |
| Estimate risk under misuse (Art. 9(2b)) | L3 |
| Post-market monitoring (Art. 9(2c)) | L6 |
| Risk mitigation by design (Art. 9(5)) | L2 |
| Testing with defined metrics (Art. 9(6) to 9(8)) | CI/CD |
| Human oversight (Art. 14) | L5 |
| Automatic logging (Art. 12) | L4 |
| 6-month retention (Art. 19) | L4 |

The Three Non-Negotiable Insights

First: Article 9's "continuous iterative process" requirement actually aligns with what good agentic governance demands. The problem is not the regulation; it is the industry's habit of treating compliance as a pre-deployment checkbox. Runtime risk scoring, continuous adversarial testing, and behavioural drift monitoring are not just compliance measures. They are the only architecturally correct approach to governing systems whose risk profiles emerge at runtime.

Second: The tooling is production-ready today. A stack combining LangGraph (orchestration, HITL), NeMo Guardrails and Guardrails AI (runtime safety), Giskard (testing), MLflow (lifecycle governance), OpenTelemetry (observability), and Credo AI (compliance management) covers every Article 9 obligation. What most organizations lack is integration, not tooling.

Third: The compliance deadline creates urgency regardless of legislative delays. NIST launched its AI Agent Standards Initiative on February 17, 2026. Singapore's IMDA published the first government-issued framework specifically for agentic systems in January 2026. The regulatory convergence is underway. Organizations that build the six-layer architecture now are positioned for compliance not just under the EU AI Act but across the emerging global regulatory landscape.

The healthcare triage case is a composite, but the pattern it illustrates is not hypothetical. Agents like it can reach production without runtime risk scoring, without HITL enforcement on irreversible actions, and without audit trails that satisfy Article 12. In that state, every runtime decision the agent makes is an undocumented, unmitigated risk event.

That is the governance gap. This architecture closes it.

Resources and Further Reading

Companion repo: github.com/SubhanshuMG/agentic-ai-eu-compliance

EU AI Act full text: artificialintelligenceact.eu

Article 9 annotated: artificial-intelligence-act.com/Article_9

NIST AI Agent Standards Initiative: nist.gov/caisi/ai-agent-standards-initiative

Singapore IMDA Agentic AI Framework: imda.gov.sg

CSA AAGATE reference architecture: cloudsecurityalliance.org

LangGraph HITL documentation: langchain.com

OpenTelemetry GenAI semantic conventions: opentelemetry.io

Giskard EU AI Act scanner: giskard.ai

OWASP Agentic Security Initiative: owasp.org

The healthcare example is a composite illustration and does not represent any specific organization's system. Companion code is MIT licensed.