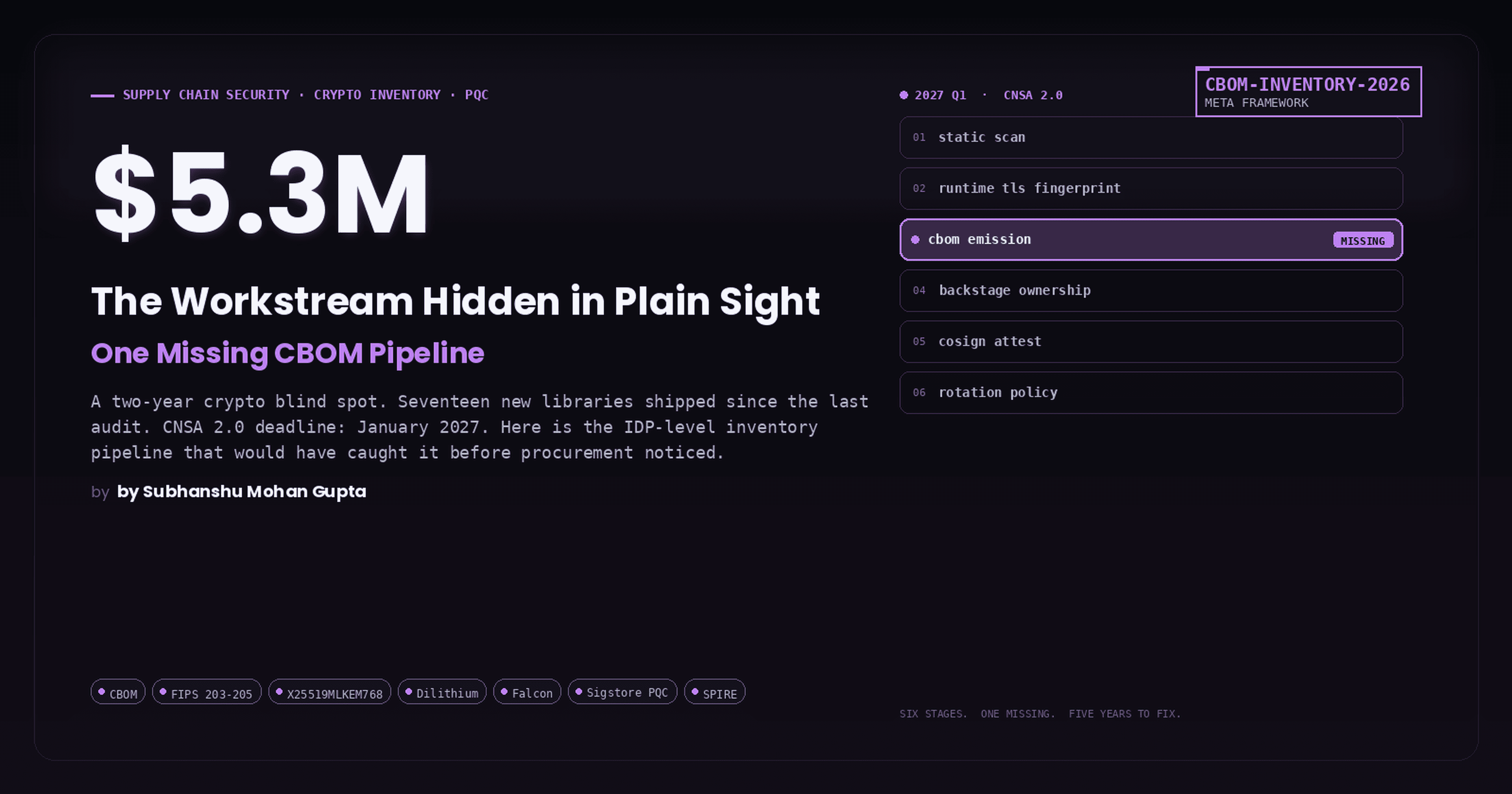

The $4.45M Mistake: How a Missing SBOM Requirement Let the XZ Utils Backdoor Slip Past Millions of Servers

A 3-year patient infiltration. One 500ms anomaly. Zero automated defenses. Here's the full architecture of what happened, what should have stopped it, and how to build a self-healing supply chain security system into your IDP today.

A versatile DevSecOps Engineer specialized in creating secure, scalable, and efficient systems that bridge development and operations. My expertise lies in automating complex processes, integrating AI-driven solutions, and ensuring seamless, secure delivery pipelines. With a deep understanding of cloud infrastructure, CI/CD, and cybersecurity, I thrive on solving challenges at the intersection of innovation and security, driving continuous improvement in both technology and team dynamics.

The XZ Utils backdoor (CVE-2024-3094) nearly became the most devastating supply chain attack in history: a patient, three-year social engineering campaign that embedded a remote code execution backdoor into a compression library shipped with virtually every Linux distribution. Only a 500-millisecond performance anomaly, noticed by a single developer, prevented what could have become a skeleton key to a large share of the internet's SSH servers. This guide dissects exactly how the attack worked, then builds a complete self-healing supply chain audit system, with real code, designed to surface the signals this attack left behind.

The XZ Utils incident exposed a fundamental truth: open-source supply chain security cannot rely on human vigilance alone. Automated SBOM generation, cryptographic signing, and policy enforcement at every pipeline gate are now non-negotiable. What follows is a comprehensive architecture and implementation guide for building these defenses into an Internal Developer Platform (IDP) with working GitHub Actions workflows, OPA Rego policies, Cosign signing, Kyverno admission control, and Tekton pipeline integration.

The XZ Utils attack: a masterclass in patient infiltration

Think of it like a bank heist movie

Before diving into the technical details, consider this analogy. Imagine a small-town bank with a single, aging security guard, dedicated but overworked and underpaid. A con artist shows up, starts volunteering at the bank, doing helpful tasks for free. Meanwhile, fake customers start complaining loudly that the guard is too slow, too unreliable, and should retire. After two years of helpfulness, the con artist gets their own set of keys. They don't rob the bank immediately. Instead, they install a hidden mechanism in the vault door, one that only opens with their specific fingerprint, invisible to everyone else. They hide this mechanism inside replacement parts for the vault's air ventilation system, something nobody thinks to inspect. If a random engineer hadn't noticed the vault door was taking half a second longer to open, every bank in the world running the same vault model would have been silently compromised.

That is, almost literally, what happened with XZ Utils.

Three years of social engineering

The attacker, operating as "Jia Tan" (GitHub: JiaT75), created their account on January 26, 2021 and submitted their first innocuous patch, an .editorconfig file, on October 29, 2021. Over the next year, they submitted legitimate, helpful contributions to build trust with the sole maintainer, Lasse Collin.

The pressure campaign began in April 2022. Sock puppet accounts, "Jigar Kumar" and "Dennis Ens", bombarded the xz-devel mailing list with complaints about slow maintenance. Kumar wrote: "Progress will not happen until there is new maintainer. The current maintainer lost interest or doesn't care to maintain anymore." Collin, who had publicly acknowledged struggling with mental health issues, responded: "I haven't lost interest but my ability to care has been fairly limited mostly due to longterm mental health issues... It's also good to keep in mind that this is an unpaid hobby project."

The pressure worked. Key milestones in the takeover:

June 2022: Collin acknowledges Jia Tan as "practically a co-maintainer already"

September 2022: Jia Tan gives authoritative release summaries

November 2022: Bug report email changed to shared alias; README updated to list "the project maintainers Lasse Collin and Jia Tan"

January 2023: Collin tags his final release (v5.4.1)

March 2023: Jia Tan tags their first release (v5.4.2) and takes over oss-fuzz contact

June 2023: "Hans Jansen" (another suspected persona) introduces GNU Indirect Function (IFUNC) support, the exact mechanism the backdoor would later exploit

July 2023: Jia Tan disables IFUNC in oss-fuzz builds, preventing the fuzzer from detecting the backdoor

Brian Krebs confirmed that Jigar Kumar, Dennis Ens, and Hans Jansen's email addresses never appeared anywhere else on the internet, not even in data breach databases, strongly indicating purpose-built sock puppet accounts.

The technical mechanism: five layers deep

The backdoor was architecturally elegant and terrifyingly well-hidden, operating through a five-stage chain.

Stage 1: Payload hidden in binary test files.

On February 23, 2024, Jia Tan committed two binary "test" files: tests/files/bad-3-corrupt_lzma2.xz and tests/files/good-large_compressed.lzma. The repository already noted that test files were "created by hand with a hex editor", making opaque binary additions appear routine. These files contained the x86-64 object code payload. Neither file was referenced by any actual test.

Stage 2: Modified build system activates the payload.

The release tarballs (but not the git repository) included a malicious build-to-host.m4 file. This is critical: release tarballs routinely include auto-generated build files absent from git, so the divergence went unnoticed. The m4 macro injected an obfuscated shell script into the configure process that checked preconditions (x86-64 Linux, GCC, GNU linker, building as deb/rpm) and then extracted the payload using a chain of sed, tr, head, and xz commands:

```sh
sed rpath ../../../tests/files/bad-3-corrupt_lzma2.xz | \
  tr "\t \-_" " \t_\-" | xz -d | /bin/bash
```

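That first script, in turn, unpacked a second stage hidden in good-large_compressed.lzma, which extracted the precompiled x86-64 object file and linked it into liblzma as liblzma_la-crc64-fast.o.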
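The tr stage is a plain byte substitution that swaps tab with space and "-" with "_". Because that mapping is its own inverse, applying it "repairs" a file that otherwise fails to decompress, which is why the fixture passed as a legitimately corrupt test file. A minimal Python illustration of the same mapping on a harmless sample string (the function name is ours, and nothing here reproduces the real payload):

```python
# Illustration only: reproduces the byte mapping of `tr "\t \-_" " \t_\-"`
# on a harmless sample; it does not touch any real XZ Utils file.
SWAP_TABLE = bytes.maketrans(b"\t -_", b" \t_-")


def tr_swap(data: bytes) -> bytes:
    """Swap tab<->space and '-'<->'_', as the stage-2 pipeline did."""
    return data.translate(SWAP_TABLE)


if __name__ == "__main__":
    sample = b"good-file_name\twith spaces"
    scrambled = tr_swap(sample)
    print(scrambled)  # b'good_file-name with\tspaces'
    # The mapping is an involution: applying it twice restores the input.
    assert tr_swap(scrambled) == sample
```

The property that matters is the involution: a scanner that never applies the swap sees only a "corrupt" archive.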

Stage 3: IFUNC hijack of RSA_public_decrypt.

The payload used the GNU Indirect Function mechanism (introduced months earlier by "Hans Jansen's" patches) to replace the IFUNC resolvers for crc32_resolve() and crc64_resolve(). During process startup, before the GOT was remapped read-only, the malicious resolver parsed in-memory symbol tables, installed an audit hook into the dynamic linker, and waited for RSA_public_decrypt@plt to be resolved. When found, it redirected the function to the backdoor code and uninstalled the hook to cover its tracks.

Stage 4: The sshd, libsystemd, liblzma chain.

OpenSSH does not directly depend on liblzma. However, many Linux distributions (Debian, Ubuntu, Fedora) patch sshd to support systemd notification via sd_notify, linking sshd against libsystemd, which transitively depends on liblzma. The dependency chain sshd -> libsystemd -> liblzma (backdoored) loaded the malicious code directly into the SSH daemon's address space.

Stage 5: Remote code execution gated by Ed448 cryptography.

The hooked RSA_public_decrypt extracted a payload from the RSA public modulus in SSH certificate authentication (fully attacker-controlled), decrypted it with a hardcoded ChaCha20 key, and verified a digital signature using a hardcoded Ed448 public key. Only the holder of the corresponding Ed448 private key could trigger execution. If verified, the payload was passed directly to system(), achieving unauthenticated remote code execution. As Filippo Valsorda stated: "It's RCE, not auth bypass, and gated/unreplayable."

The use of Ed448 (rather than the common Ed25519), the multi-year campaign, and sophisticated anti-analysis countermeasures (detecting TERM, LD_DEBUG, rr, checking argv[0] for /usr/sbin/sshd, an environment variable kill switch yolAbejyiejuvnup=Evjtgvsh5okmkAvj) have led many researchers to suspect state-sponsored activity, although attribution remains unconfirmed.

Discovery: 500 milliseconds saved the internet

Andres Freund, a Microsoft principal engineer and PostgreSQL committer, discovered the backdoor while doing routine micro-benchmarking on Debian sid. He noticed SSH logins consuming unexpectedly high CPU and taking ~0.8 seconds instead of ~0.3 seconds, a 500ms anomaly. He also encountered Valgrind errors related to liblzma. As he wrote on the oss-security mailing list on March 29, 2024: "After observing a few odd symptoms around liblzma on Debian sid installations over the last weeks (logins with ssh taking a lot of CPU, valgrind errors) I figured out the answer."
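Freund's observation was, in effect, a performance regression test run by hand. A minimal sketch of turning it into an automated check, assuming you already collect login-latency samples per build; the function name and both thresholds are illustrative choices, not taken from any tool:

```python
from statistics import median


def latency_regression(baseline_s, current_s, max_increase_s=0.1, max_ratio=1.5):
    """Flag a regression when the median latency grows by more than an
    absolute budget AND by more than a relative ratio.

    Both thresholds are illustrative defaults, not tuned values."""
    base = median(baseline_s)
    cur = median(current_s)
    increase = cur - base
    regressed = increase > max_increase_s and cur > base * max_ratio
    return regressed, increase


if __name__ == "__main__":
    # Roughly the numbers Freund reported: ~0.3 s before, ~0.8 s after.
    flagged, delta = latency_regression([0.29, 0.30, 0.31], [0.79, 0.80, 0.81])
    print(flagged, round(delta, 3))  # True 0.5
```

Requiring both an absolute and a relative increase keeps noisy, very fast operations from paging anyone while still catching a 0.3 s to 0.8 s jump.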

The CVE received a CVSS score of 10.0, the maximum. Affected versions were 5.6.0 (released February 24, 2024) and 5.6.1 (released March 9, 2024). Only bleeding-edge distributions had adopted them: Fedora 40/Rawhide, Debian unstable/testing, openSUSE Tumbleweed, and Kali Linux. No stable production distribution was compromised. Red Hat issued an emergency advisory: "Immediately stop using Fedora 40 or Fedora Rawhide."

No automated security tool, code review process, or CI/CD pipeline caught this attack. Its discovery came down to one engineer's curiosity and a lucky accident.

What an SBOM is and why it matters now

A Software Bill of Materials (SBOM) is a machine-readable inventory of every component, library, and dependency in a software artifact, essentially a nutritional label for code. Executive Order 14028 (May 2021) mandated SBOMs for federal software procurement, and the NTIA defined seven minimum data fields: supplier name, component name, version, unique identifiers (PURL/CPE), dependency relationships, SBOM author, and timestamp.
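The seven NTIA fields map onto concrete SPDX 2.x JSON keys, so completeness can be checked mechanically. A minimal sketch, assuming an SPDX JSON document as produced by Syft; the field mapping below is our approximation of the NTIA-to-SPDX mapping, and the function name is hypothetical:

```python
import json


def missing_ntia_elements(doc: dict) -> list[str]:
    """Return NTIA minimum elements absent from an SPDX 2.x JSON document.

    Approximate mapping used here:
      supplier -> packages[].supplier       name -> packages[].name
      version  -> packages[].versionInfo    identifiers -> packages[].externalRefs
      relationships -> relationships        author -> creationInfo.creators
      timestamp -> creationInfo.created
    """
    missing = []
    info = doc.get("creationInfo", {})
    if not info.get("creators"):
        missing.append("SBOM author (creationInfo.creators)")
    if not info.get("created"):
        missing.append("timestamp (creationInfo.created)")
    if not doc.get("relationships"):
        missing.append("dependency relationships (relationships)")
    for pkg in doc.get("packages", []):
        label = pkg.get("name", "<unnamed>")
        for field, element in (("supplier", "supplier name"),
                               ("name", "component name"),
                               ("versionInfo", "version")):
            # NOASSERTION is SPDX's explicit "unknown", which fails the NTIA bar
            if not pkg.get(field) or pkg.get(field) == "NOASSERTION":
                missing.append(f"{element} for package {label}")
        if not any(ref.get("referenceType") in ("purl", "cpe23Type")
                   for ref in pkg.get("externalRefs", [])):
            missing.append(f"unique identifier (purl/CPE) for package {label}")
    return missing


if __name__ == "__main__":
    sbom = {
        "creationInfo": {"created": "2024-03-29T00:00:00Z", "creators": ["Tool: syft"]},
        "relationships": [{"spdxElementId": "SPDXRef-DOCUMENT",
                           "relationshipType": "DESCRIBES",
                           "relatedSpdxElement": "SPDXRef-xz"}],
        "packages": [{"name": "xz-utils", "versionInfo": "5.4.5",
                      "supplier": "NOASSERTION", "externalRefs": []}],
    }
    print(json.dumps(missing_ntia_elements(sbom), indent=2))
```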

Two dominant formats compete. SPDX (Linux Foundation, ISO/IEC 5962:2021) excels at license compliance with file-level and snippet-level granularity. CycloneDX (OWASP, ECMA TC54) is security-focused with native VEX support, vulnerability fields, and component pedigree tracking. Both are supported by major tools. For supply chain security, CycloneDX's prescriptive design and built-in vulnerability exchange make it the stronger choice; for license-heavy regulated industries, SPDX's ISO standardization wins.
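Because the pipelines later in this guide emit SPDX (GitHub Actions) and CycloneDX (Tekton), downstream tooling usually needs a common view of both. A minimal sketch that reduces either format to a set of "name@version" strings; the function name is hypothetical, and it reads only top-level CycloneDX components (nested components are ignored):

```python
import json


def component_versions(doc: dict) -> set[str]:
    """Return {"name@version"} from an SPDX 2.x or CycloneDX JSON SBOM."""
    if "spdxVersion" in doc:  # SPDX JSON
        items = ((p.get("name"), p.get("versionInfo")) for p in doc.get("packages", []))
    elif doc.get("bomFormat") == "CycloneDX":  # CycloneDX JSON (top level only)
        items = ((c.get("name"), c.get("version")) for c in doc.get("components", []))
    else:
        raise ValueError("unrecognised SBOM format")
    return {f"{name}@{version or 'unknown'}" for name, version in items if name}


if __name__ == "__main__":
    spdx = json.loads('{"spdxVersion": "SPDX-2.3", "packages": [{"name": "xz-utils", "versionInfo": "5.6.1"}]}')
    cdx = json.loads('{"bomFormat": "CycloneDX", "specVersion": "1.5", "components": [{"name": "xz-utils", "version": "5.6.1"}]}')
    print(component_versions(spdx) == component_versions(cdx))  # True
```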

Five SBOM signals that would have flagged XZ Utils

An SBOM alone would not have prevented CVE-2024-3094. But systematic SBOM monitoring would have raised multiple alarms:

New maintainer detection. SBOM metadata tracks producers and suppliers. The transition from Lasse Collin to "Jia Tan" as release author, especially on a single-maintainer project with OpenSSF Scorecard risk scores of ~5.4/10, would have triggered review in any organization monitoring maintainer changes on critical dependencies.

Modified build scripts absent from source. The malicious build-to-host.m4 existed only in release tarballs, not in git. Comparing SBOMs generated from a source checkout versus the release tarball would reveal unexplained divergences. StepSecurity's Harden-Runner analysis showed multiple anomalous sed commands modifying the Makefile during 5.6.0 builds, a clear behavioral anomaly.

New binary files in a source repository. The payload was hidden in binary test files that were not exercised by any test. SPDX's file-level analysis capability could flag new opaque binaries, and build-process SBOM diffing between versions would detect the additions.
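Both signals reduce to the same operation: hash every file in two trees and diff the manifests. A minimal sketch, assuming both trees are already unpacked on disk; it works for a git checkout versus a release tarball as well as for release N versus release N+1. The function names and the NUL-byte binary heuristic are our own illustrative choices:

```python
import hashlib
import tempfile
from pathlib import Path


def manifest(root: Path) -> dict[str, str]:
    """Map each file's relative POSIX path to its SHA-256, skipping .git metadata."""
    out = {}
    for p in sorted(root.rglob("*")):
        rel = p.relative_to(root)
        if p.is_file() and ".git" not in rel.parts:
            out[rel.as_posix()] = hashlib.sha256(p.read_bytes()).hexdigest()
    return out


def looks_binary(path: Path, probe: int = 8192) -> bool:
    """Crude heuristic: a NUL byte in the first few KiB means 'binary'."""
    return b"\x00" in path.read_bytes()[:probe]


def diff_trees(old_root: Path, new_root: Path) -> dict[str, list[str]]:
    """Report files a reviewer should look at between two source trees."""
    old, new = manifest(old_root), manifest(new_root)
    added = sorted(set(new) - set(old))
    changed = sorted(p for p in set(old) & set(new) if old[p] != new[p])
    added_binaries = [p for p in added if looks_binary(new_root / p)]
    return {"added": added, "changed": changed, "added_binaries": added_binaries}


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as a, tempfile.TemporaryDirectory() as b:
        old, new = Path(a), Path(b)
        (old / "configure.ac").write_text("AC_INIT\n")
        (new / "configure.ac").write_text("AC_INIT\n")
        (new / "m4").mkdir()
        (new / "m4" / "build-to-host.m4").write_text("dnl generated\n")
        (new / "tests").mkdir()
        (new / "tests" / "blob.xz").write_bytes(b"\xfd7zXZ\x00\x00")
        print(diff_trees(old, new))
```

Against the 5.6.0 tarball, the "added" list would have contained build-to-host.m4 even though the git tree did not.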

Unexpected transitive dependencies. Binary SBOM analysis of sshd would reveal linkage to liblzma despite OpenSSH's source having no such dependency. The discrepancy between source-level and binary-level dependency graphs is a critical signal.
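Detecting that discrepancy is a graph walk: compute everything the binary actually loads, then subtract what the source declares. A minimal sketch on a hypothetical, simplified linkage graph; in practice the edges would come from ELF DT_NEEDED entries (for example via readelf -d or ldd) and the declared set from upstream build files. Function names are ours:

```python
from collections import deque


def transitive_deps(graph: dict[str, list[str]], root: str) -> dict[str, list[str]]:
    """Breadth-first walk of a linkage graph; returns one load path per reachable library."""
    paths = {root: [root]}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for dep in graph.get(node, []):
            if dep not in paths:
                paths[dep] = paths[node] + [dep]
                queue.append(dep)
    del paths[root]
    return paths


def unexpected_deps(graph: dict[str, list[str]], root: str, declared: set[str]) -> dict[str, list[str]]:
    """Libraries in the binary-level graph that the source-level manifest never declared."""
    return {lib: path for lib, path in transitive_deps(graph, root).items() if lib not in declared}


if __name__ == "__main__":
    # Hypothetical, simplified DT_NEEDED graph for a distro-patched sshd
    graph = {
        "sshd": ["libcrypto", "libsystemd", "libc"],
        "libsystemd": ["liblzma", "libzstd", "libc"],
        "liblzma": ["libc"],
    }
    declared = {"libcrypto", "libc"}  # simplified view of what upstream OpenSSH links
    for lib, path in sorted(unexpected_deps(graph, "sshd", declared).items()):
        print(f"{lib}: {' -> '.join(path)}")
```

Reporting the full path, not just the library name, is what makes the alert actionable: "sshd -> libsystemd -> liblzma" points straight at the distro patch.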

Rapid incident response. Organizations with SBOMs could instantly query "which systems contain xz-utils 5.6.0 or 5.6.1?", answering in minutes rather than days. Wiz demonstrated this with their agentless SBOM inventory; Sumo Logic showed SPDX JSON queries identifying affected hosts immediately.
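Assuming one SPDX JSON file per host or image is already collected in a directory, the "which systems contain 5.6.0 or 5.6.1?" question is a short query. A minimal sketch; the package-name list is an assumption that varies by ecosystem, and the version normalization is deliberately simple (strip epoch and distro revision only):

```python
import json
from pathlib import Path

AFFECTED_UPSTREAM = {"5.6.0", "5.6.1"}
# Package names differ across ecosystems; this list is an assumption, extend it for your fleet.
XZ_NAMES = {"xz", "xz-utils", "xz-libs", "liblzma", "liblzma5"}


def upstream_version(version: str) -> str:
    """Strip an epoch ("1:") and a distro revision ("-1", "-1.fc40").
    Debian's revert "5.6.1+really5.4.5-1" stays "5.6.1+really5.4.5", so it is not flagged."""
    return version.split(":")[-1].split("-")[0]


def affected_packages(sbom: dict) -> list[tuple[str, str]]:
    return [
        (p.get("name", ""), p.get("versionInfo", ""))
        for p in sbom.get("packages", [])
        if p.get("name") in XZ_NAMES
        and upstream_version(p.get("versionInfo", "")) in AFFECTED_UPSTREAM
    ]


def scan_inventory(sbom_dir: Path) -> dict[str, list[tuple[str, str]]]:
    """Map each *.spdx.json file in sbom_dir to the affected xz packages it lists."""
    results = {}
    for path in sorted(sbom_dir.glob("*.spdx.json")):
        hits = affected_packages(json.loads(path.read_text()))
        if hits:
            results[path.name] = hits
    return results
```

Usage is a single call such as scan_inventory(Path("sboms")), where the directory name is hypothetical.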

The honest assessment: SBOMs are necessary but not sufficient. They must be combined with reproducible builds, maintainer vetting, behavioral analysis, and automated policy enforcement, which is exactly what we build next.

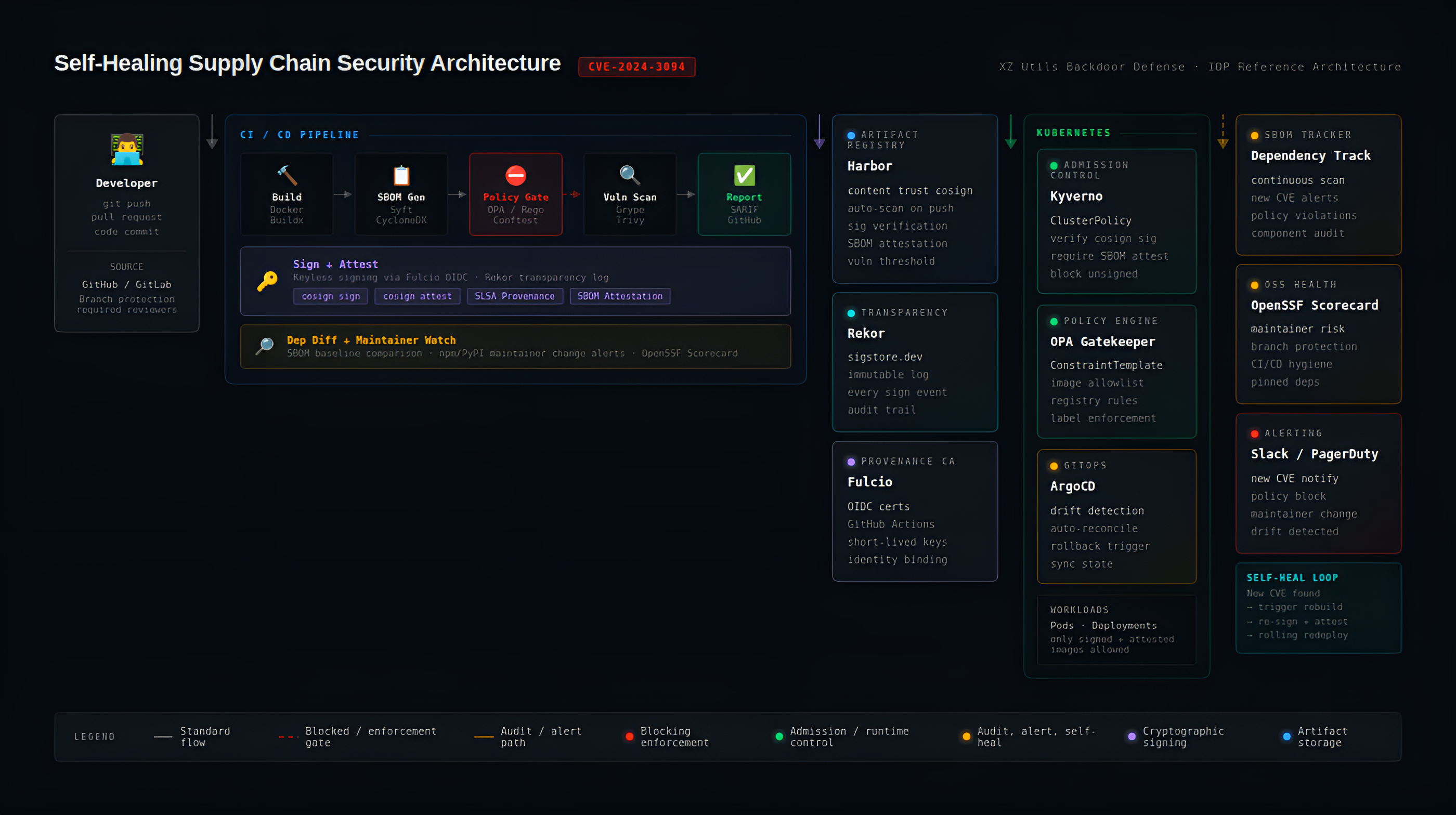

Architecture of a self-healing supply chain audit system

The system operates as a defense-in-depth pipeline with six enforcement points, each independently capable of blocking compromised artifacts.

The core components:

SBOM generation (Syft) produces CycloneDX/SPDX inventories at build time

Policy engine (OPA with Rego, Conftest) evaluates SBOMs against blocklists, license rules, dependency drift, and completeness requirements

Vulnerability scanning (Grype, Trivy) fails builds on critical/high CVEs

Cryptographic signing (Cosign/Sigstore) provides keyless signatures via Fulcio OIDC certificates and records every signing event in the Rekor transparency log

Artifact registry (Harbor) stores images with attached signatures, SBOM attestations, and enforces pull-time policies

Admission control (Kyverno or sigstore/policy-controller) blocks unsigned or un-attested images at the Kubernetes API server

Drift detection (ArgoCD, continuous rescanning, Dependency-Track) detects newly discovered vulnerabilities in already-deployed artifacts and triggers automated remediation

The self-healing aspect comes from three feedback loops: (1) scheduled vulnerability rescanning that triggers rebuilds when new CVEs affect deployed images, (2) GitOps reconciliation that reverts unauthorized manual deployments, and (3) admission control that prevents bypassing the pipeline entirely.
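Feedback loop (1) boils down to joining the SBOMs of deployed images against a fresh advisory feed. Dependency-Track or a scheduled Grype run normally does this matching; the sketch below only shows the decision logic, with a hypothetical, simplified advisory format ({"component": "name@version", "severity": ...}) and hypothetical names:

```python
from dataclasses import dataclass

SEVERITY_ORDER = ["low", "medium", "high", "critical"]


@dataclass(frozen=True)
class Deployment:
    image_digest: str
    components: frozenset[str]  # "name@version" entries from the image's attested SBOM


def rebuild_queue(deployments: list[Deployment], advisories: list[dict],
                  min_severity: str = "high") -> list[str]:
    """Return digests of deployed images containing a component named in a
    newly published advisory at or above min_severity."""
    threshold = SEVERITY_ORDER.index(min_severity)
    vulnerable = {
        a["component"] for a in advisories
        if SEVERITY_ORDER.index(a["severity"]) >= threshold
    }
    return sorted(d.image_digest for d in deployments if d.components & vulnerable)
```

Each digest returned would then trigger a rebuild through the normal pipeline, so the replacement image is signed and attested like any other.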

Step 1: GitHub Actions workflow with full supply chain security

This workflow builds a container image, generates an SBOM, scans for vulnerabilities, signs the image, and attests the SBOM, all with keyless Sigstore.

```yaml
name: Supply Chain Security Pipeline

on:
  push:
    branches: [main]
  pull_request:
    branches: [main]

env:
  REGISTRY: ghcr.io
  IMAGE_NAME: ${{ github.repository }}

jobs:
  build-sign-scan:
    runs-on: ubuntu-latest
    permissions:
      contents: read
      packages: write
      id-token: write        # Required for Sigstore OIDC keyless signing
      security-events: write # Required for SARIF upload

    steps:
      # Checkout
      - name: Checkout repository
        uses: actions/checkout@v4

      # Install supply chain tools
      - name: Install Cosign
        uses: sigstore/cosign-installer@v3

      - name: Install Syft
        uses: anchore/sbom-action/download-syft@v0

      # Docker build and push
      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v3

      - name: Login to GHCR
        uses: docker/login-action@v3
        with:
          registry: ${{ env.REGISTRY }}
          username: ${{ github.actor }}
          password: ${{ secrets.GITHUB_TOKEN }}

      - name: Extract Docker metadata
        id: meta
        uses: docker/metadata-action@v5
        with:
          images: ${{ env.REGISTRY }}/${{ env.IMAGE_NAME }}
          tags: |
            type=sha,format=long
            type=semver,pattern={{version}}

      - name: Build and push image
        id: build-and-push
        uses: docker/build-push-action@v6
        with:
          context: .
          push: true
          tags: ${{ steps.meta.outputs.tags }}
          labels: ${{ steps.meta.outputs.labels }}

      # Generate SBOM with Syft (by digest, never by tag)
      - name: Generate SBOM
        id: sbom
        uses: anchore/sbom-action@v0
        with:
          image: ${{ env.REGISTRY }}/${{ env.IMAGE_NAME }}@${{ steps.build-and-push.outputs.digest }}
          format: spdx-json
          output-file: sbom.spdx.json

      # Policy check with Conftest/OPA
      - name: Install Conftest
        run: |
          LATEST=$(wget -qO- "https://api.github.com/repos/open-policy-agent/conftest/releases/latest" | grep '"tag_name"' | sed -E 's/.*"v([^"]+)".*/\1/')
          wget -q "https://github.com/open-policy-agent/conftest/releases/download/v${LATEST}/conftest_${LATEST}_Linux_x86_64.tar.gz"
          tar xzf "conftest_${LATEST}_Linux_x86_64.tar.gz"
          sudo mv conftest /usr/local/bin/

      - name: Run SBOM policy checks
        run: conftest test sbom.spdx.json --policy policy/

      # Vulnerability scan with Grype
      - name: Scan for vulnerabilities
        id: scan
        uses: anchore/scan-action@v7
        with:
          sbom: sbom.spdx.json
          fail-build: true
          severity-cutoff: critical
          output-format: sarif

      - name: Upload SARIF report
        if: always()
        uses: github/codeql-action/upload-sarif@v3
        with:
          sarif_file: ${{ steps.scan.outputs.sarif }}

      # Sign image with Cosign (keyless)
      - name: Sign container image
        run: |
          cosign sign --yes \
            ${{ env.REGISTRY }}/${{ env.IMAGE_NAME }}@${{ steps.build-and-push.outputs.digest }}

      # Attest SBOM
      - name: Attest SBOM to image
        run: |
          cosign attest --yes \
            --predicate sbom.spdx.json \
            --type spdxjson \
            ${{ env.REGISTRY }}/${{ env.IMAGE_NAME }}@${{ steps.build-and-push.outputs.digest }}

      # Verify everything (the identity regexp is anchored to this repository)
      - name: Verify signature
        run: |
          cosign verify \
            --certificate-identity-regexp="^https://github.com/${{ github.repository }}/" \
            --certificate-oidc-issuer=https://token.actions.githubusercontent.com \
            ${{ env.REGISTRY }}/${{ env.IMAGE_NAME }}@${{ steps.build-and-push.outputs.digest }}

      - name: Verify SBOM attestation
        run: |
          cosign verify-attestation \
            --type spdxjson \
            --certificate-identity-regexp="^https://github.com/${{ github.repository }}/" \
            --certificate-oidc-issuer=https://token.actions.githubusercontent.com \
            ${{ env.REGISTRY }}/${{ env.IMAGE_NAME }}@${{ steps.build-and-push.outputs.digest }}
```

Key details: id-token: write is mandatory for keyless Sigstore signing; it mints the OIDC token that Fulcio uses to issue a short-lived certificate. The --yes flag is required in CI for non-interactive acceptance. All image references use digest (@sha256:...), never tags, to prevent TOCTOU attacks.
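The digest-only rule is easy to enforce mechanically, for example as a pre-merge lint over the image references in Kubernetes manifests. A minimal sketch; the regular expression is our assumption and covers sha256 digests only:

```python
import re

# A digest-pinned OCI reference ends in @sha256:<64 lowercase hex characters>.
# "repo:tag@sha256:..." also passes, since the digest takes precedence over the tag.
DIGEST_REF = re.compile(r"^[^@\s]+@sha256:[0-9a-f]{64}$")


def is_digest_pinned(ref: str) -> bool:
    return bool(DIGEST_REF.match(ref))


def unpinned(refs: list[str]) -> list[str]:
    """Return the references that still rely on a mutable tag."""
    return [r for r in refs if not is_digest_pinned(r)]


if __name__ == "__main__":
    refs = ["ghcr.io/my-org/myapp@sha256:" + "a" * 64, "ghcr.io/my-org/myapp:latest"]
    print(unpinned(refs))  # ['ghcr.io/my-org/myapp:latest']
```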

Step 2: OPA Rego policies for SBOM enforcement

These policies run via conftest test sbom.spdx.json --policy policy/ in the CI pipeline. Place them in a policy/ directory.

Denied packages blocklist

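```rego
# policy/denied_packages.rego
package main

import future.keywords.contains
import future.keywords.if
import future.keywords.in

# Versionless entries block every version; versioned entries block one release.
# The PURL type must match what your SBOM tool emits (Syft reports distro
# packages as pkg:deb/... or pkg:rpm/..., not pkg:generic/...).
denied_packages := {
	"pkg:npm/event-stream",
	"pkg:npm/flatmap-stream",
	"pkg:pypi/jeIlyfish", # typosquat of jellyfish (capital I, not lowercase l)
	"pkg:generic/xz-utils@5.6.0",
	"pkg:generic/xz-utils@5.6.1",
}

deny contains msg if {
	some component in input.packages
	some ref in component.externalRefs
	ref.referenceType == "purl"
	some denied in denied_packages
	purl_matches(ref.referenceLocator, denied)
	msg := sprintf("BLOCKED: package '%s' (purl: %s) is on the deny list", [component.name, ref.referenceLocator])
}

# Exact match after dropping "?qualifiers"
purl_matches(purl, denied) if {
	split(purl, "?")[0] == denied
}

# A versionless entry also matches any versioned PURL (pkg:npm/event-stream@3.3.6)
purl_matches(purl, denied) if {
	startswith(split(purl, "?")[0], concat("", [denied, "@"]))
}
```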

License compliance

```rego
# policy/license_compliance.rego
package main

import future.keywords.contains
import future.keywords.if
import future.keywords.in

prohibited_licenses := {
	"GPL-2.0-only", "GPL-2.0-or-later",
	"GPL-3.0-only", "GPL-3.0-or-later",
	"AGPL-3.0-only", "AGPL-3.0-or-later",
	"SSPL-1.0",
}

# Syft often leaves licenseConcluded as NOASSERTION and fills licenseDeclared,
# so check both. Compound expressions ("MIT OR GPL-2.0-only") are not parsed here.
deny contains msg if {
	some pkg in input.packages
	some field in ["licenseConcluded", "licenseDeclared"]
	license := pkg[field]
	license in prohibited_licenses
	msg := sprintf("LICENSE VIOLATION: '%s' uses prohibited license '%s'", [pkg.name, license])
}
```

Dependency drift detection

```rego
# policy/dependency_drift.rego
# Load baseline: conftest test sbom.spdx.json --policy policy/ --data baseline.json
package main

import future.keywords.contains
import future.keywords.if
import future.keywords.in

# Reported as a warning so new dependencies trigger review without failing the
# build; change "warn" to "deny" to make drift blocking.
warn contains msg if {
	data.approved_packages
	some pkg in input.packages
	name_version := sprintf("%s@%s", [pkg.name, pkg.versionInfo])
	not name_version in data.approved_packages
	msg := sprintf("NEW DEPENDENCY: '%s' not in approved baseline; review required", [name_version])
}

warn contains msg if {
	not data.approved_packages
	msg := "No baseline loaded; dependency drift detection skipped"
}
```
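The drift policy expects a baseline loaded with --data, but producing one is left implicit. A minimal sketch that derives it from an SBOM you have already reviewed and approved. The script name make_baseline.py is hypothetical, and the sketch assumes Conftest exposes the JSON file's top-level keys under data, so the key below becomes data.approved_packages:

```python
import json
import sys


def build_baseline(sbom: dict) -> dict:
    """Turn an approved SPDX JSON SBOM into the data document read by the drift policy.

    Entries use the same "name@versionInfo" format as the policy's sprintf call."""
    approved = sorted({
        f'{p["name"]}@{p["versionInfo"]}'
        for p in sbom.get("packages", [])
        if "name" in p and "versionInfo" in p
    })
    return {"approved_packages": approved}


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python make_baseline.py approved.spdx.json > baseline.json
    with open(sys.argv[1]) as fh:
        json.dump(build_baseline(json.load(fh)), sys.stdout, indent=2)
```

Regenerating the baseline only after a human approves a change keeps the policy meaningful; regenerating it on every build would silently accept drift.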

SBOM completeness check

```rego
# policy/sbom_completeness.rego
package main

import future.keywords.contains
import future.keywords.if
import future.keywords.in # needed for the "some ... in" iterations below

deny contains msg if {
	not input.spdxVersion
	msg := "SBOM missing required field: spdxVersion"
}

deny contains msg if {
	not input.creationInfo
	msg := "SBOM missing required field: creationInfo"
}

deny contains msg if {
	input.creationInfo
	not input.creationInfo.created
	msg := "SBOM missing required field: creationInfo.created (timestamp)"
}

deny contains msg if {
	not input.packages
	msg := "SBOM has no packages; appears empty"
}

deny contains msg if {
	count(input.packages) == 0
	msg := "SBOM packages array is empty"
}

deny contains msg if {
	some i, pkg in input.packages
	not pkg.name
	msg := sprintf("Package at index %d missing required field 'name'", [i])
}

deny contains msg if {
	some pkg in input.packages
	not pkg.versionInfo
	msg := sprintf("Package '%s' missing required field 'versionInfo'", [pkg.name])
}
```

Step 3: Kubernetes admission control with Kyverno

Verify Cosign keyless signatures on all images

```yaml
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: verify-image-signatures
  annotations:
    policies.kyverno.io/title: Verify Image Signatures
    policies.kyverno.io/category: Supply Chain Security
    policies.kyverno.io/severity: high
spec:
  validationFailureAction: Enforce
  background: false
  rules:
    - name: verify-keyless-signature
      match:
        any:
          - resources:
              kinds:
                - Pod
      verifyImages:
        - imageReferences:
            - "ghcr.io/my-org/*"
          attestors:
            - entries:
                - keyless:
                    subject: "https://github.com/my-org/*/.github/workflows/*"
                    issuer: "https://token.actions.githubusercontent.com"
                    rekor:
                      url: https://rekor.sigstore.dev
```

Require SBOM attestation on all deployed images

```yaml
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: require-sbom-attestation
  annotations:
    policies.kyverno.io/title: Require SBOM Attestation
    policies.kyverno.io/category: Supply Chain Security
    policies.kyverno.io/severity: high
spec:
  validationFailureAction: Enforce
  background: false
  webhookTimeoutSeconds: 30
  rules:
    - name: check-sbom-attestation
      match:
        any:
          - resources:
              kinds:
                - Pod
      verifyImages:
        - imageReferences:
            - "ghcr.io/my-org/*"
          attestations:
            - type: https://spdx.dev/Document
              attestors:
                - entries:
                    - keyless:
                        subject: "https://github.com/my-org/*/.github/workflows/*"
                        issuer: "https://token.actions.githubusercontent.com"
                        rekor:
                          url: https://rekor.sigstore.dev
              conditions:
                - all:
                    - key: "{{ spdxVersion }}"
                      operator: Equals
                      value: "SPDX-2.3"
```

Alternative: sigstore/policy-controller ClusterImagePolicy

```yaml
apiVersion: policy.sigstore.dev/v1beta1
kind: ClusterImagePolicy
metadata:
  name: keyless-sbom-required
spec:
  images:
    - glob: "ghcr.io/my-org/**"
  authorities:
    - keyless:
        url: https://fulcio.sigstore.dev
        identities:
          - issuer: https://token.actions.githubusercontent.com
            subject: "https://github.com/my-org/my-repo/.github/workflows/build.yml@refs/heads/main"
      ctlog:
        url: https://rekor.sigstore.dev
      attestations:
        - name: must-have-sbom
          predicateType: https://spdx.dev/Document
          policy:
            type: cue
            data: |
              predicateType: "https://spdx.dev/Document"
```

Step 4: Dependency diff alerting and maintainer change detection

Add this job to your GitHub Actions workflow to detect suspicious dependency changes:

```yaml
  dependency-audit:
    runs-on: ubuntu-latest
    if: github.event_name == 'pull_request'
    steps:
      # Check out the PR and the base branch into separate directories so
      # neither SBOM picks up files from the other tree.
      - name: Checkout PR
        uses: actions/checkout@v4
        with:
          path: pr

      - name: Checkout base
        uses: actions/checkout@v4
        with:
          ref: ${{ github.base_ref }}
          path: base

      - name: Install Syft
        uses: anchore/sbom-action/download-syft@v0

      - name: Generate SBOMs for diff
        run: |
          syft scan dir:./pr -o spdx-json=pr-sbom.json
          syft scan dir:./base -o spdx-json=base-sbom.json

      - name: Dependency diff analysis
        shell: bash
        run: |
          set -euo pipefail

          # Extract name@version pairs from both SBOMs
          jq -r '.packages[] | "\(.name)@\(.versionInfo // "unknown")"' base-sbom.json | sort -u > base-deps.txt
          jq -r '.packages[] | "\(.name)@\(.versionInfo // "unknown")"' pr-sbom.json | sort -u > pr-deps.txt

          NEW_DEPS=$(comm -13 base-deps.txt pr-deps.txt)
          REMOVED_DEPS=$(comm -23 base-deps.txt pr-deps.txt)

          if [ -n "$NEW_DEPS" ]; then
            echo "::warning::New dependencies detected; review these additions for supply chain risk"
            echo "$NEW_DEPS"
          fi

          if [ -n "$REMOVED_DEPS" ]; then
            echo "::notice::Dependencies removed"
            echo "$REMOVED_DEPS"
          fi

          # Placeholder for per-package risk checks (e.g. looking up each new
          # package's upstream repository in OpenSSF Scorecard).
          # ${dep%@*} keeps scoped names such as @scope/name intact.
          if [ -n "$NEW_DEPS" ]; then
            while read -r dep; do
              echo "Needs review: ${dep%@*}"
            done <<< "$NEW_DEPS"
          fi

      # Note: this scores *this* repository, not its dependencies.
      # publish_results must stay false outside the default branch.
      - name: OpenSSF Scorecard check
        uses: ossf/scorecard-action@v2.3.1
        with:
          results_file: scorecard-results.sarif
          results_format: sarif
          publish_results: false
```

For maintainer change detection specifically, integrate with package registry APIs:

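```bash
#!/usr/bin/env bash
# maintainer-check.sh: detect maintainer changes on npm packages
# Run periodically or in CI when lockfiles change (requires npm, jq, curl).
set -euo pipefail

LOCKFILE="package-lock.json"   # lockfile v2/v3 format
ALERT_WEBHOOK="${SLACK_WEBHOOK_URL:?SLACK_WEBHOOK_URL must be set}"
CACHE_DIR="./maintainer-cache"
mkdir -p "$CACHE_DIR"

# Keys look like "node_modules/@scope/name"; strip everything up to the last node_modules/
jq -r '.packages | keys[] | select(. != "") | sub(".*node_modules/"; "")' "$LOCKFILE" |
  sort -u | while read -r pkg; do
    # npm may return "name <email>" strings or {name, email} objects; normalise to names.
    # Packages that cannot be looked up are skipped.
    CURRENT=$(npm view "$pkg" maintainers --json 2>/dev/null |
      jq -r '(if type == "array" then .[] else . end) | if type == "object" then .name else sub(" <.*"; "") end' |
      sort) || continue
    CACHED="$CACHE_DIR/${pkg//\//__}.txt"   # "@scope/name" -> "@scope__name.txt"

    if [ -f "$CACHED" ]; then
      PREVIOUS=$(cat "$CACHED")
      if [ "$CURRENT" != "$PREVIOUS" ]; then
        echo "Maintainer change on $pkg"
        echo "  Previous: $PREVIOUS"
        echo "  Current:  $CURRENT"
        # Build the Slack payload with jq so quotes and newlines are escaped correctly
        jq -n --arg text "Maintainer change detected on \`$pkg\`
Previous: $PREVIOUS
Current: $CURRENT" '{text: $text}' |
          curl -s -X POST -H 'Content-type: application/json' --data @- "$ALERT_WEBHOOK"
      fi
    fi

    printf '%s\n' "$CURRENT" > "$CACHED"
  done
```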
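The script above only detects that a maintainer list changed. Deciding how loudly to alert is the harder part: the XZ Utils pattern was a new name appearing on a project with very few maintainers. A minimal Python sketch of that triage rule, assuming snapshots are stored as sets of maintainer names per package; the function name and the small-team threshold of 2 are illustrative choices, not from any tool:

```python
def maintainer_alerts(previous: dict[str, set[str]], current: dict[str, set[str]],
                      small_team: int = 2) -> list[str]:
    """Compare two maintainer snapshots (package -> set of names).

    Additions on packages that previously had <= small_team maintainers are
    escalated to HIGH; every other change is reported as INFO."""
    alerts = []
    for pkg in sorted(current):
        before, after = previous.get(pkg), current[pkg]
        if before is None or before == after:
            continue  # first sighting (no baseline yet) or unchanged
        added, removed = sorted(after - before), sorted(before - after)
        level = "HIGH" if added and len(before) <= small_team else "INFO"
        alerts.append(f"[{level}] {pkg}: added={added} removed={removed}")
    return alerts


if __name__ == "__main__":
    prev = {"tiny-lib": {"original-maintainer"}}
    cur = {"tiny-lib": {"original-maintainer", "new-maintainer"}}
    print(maintainer_alerts(prev, cur))
```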

Step 5: Tekton pipeline with Chains for automatic provenance

The Tekton pipeline mirrors the GitHub Actions workflow but runs on Kubernetes. Tekton Chains automatically generates SLSA provenance and signs it.

Chains configuration

```bash
# Install Tekton Pipelines and Chains
kubectl apply -f https://storage.googleapis.com/tekton-releases/pipeline/latest/release.yaml
kubectl apply -f https://storage.googleapis.com/tekton-releases/chains/latest/release.yaml

# Configure Chains for SLSA v1 provenance with OCI storage
kubectl patch configmap chains-config -n tekton-chains -p='{"data":{
  "artifacts.taskrun.format": "slsa/v2alpha3",
  "artifacts.taskrun.storage": "oci",
  "artifacts.oci.storage": "oci",
  "transparency.enabled": "true"
}}'

# Generate signing keys for Chains
cosign generate-key-pair k8s://tekton-chains/signing-secrets
```

SBOM generation Task

```yaml
apiVersion: tekton.dev/v1beta1
kind: Task
metadata:
  name: generate-sbom
spec:
  description: Generate CycloneDX SBOM with Syft
  params:
    - name: IMAGE
      type: string
  workspaces:
    - name: source
  results:
    - name: SBOM_PATH
  steps:
    # The anchore/syft image ships without a shell, so call the binary via args
    - name: generate
      image: docker.io/anchore/syft:v1.4.1
      args:
        - scan
        - $(params.IMAGE)
        - --output
        - cyclonedx-json=$(workspaces.source.path)/sbom.cdx.json
    # Write the result from a small image that does have a shell
    - name: record-path
      image: docker.io/library/busybox:1.36
      script: |
        #!/bin/sh
        set -e
        printf '%s' "$(workspaces.source.path)/sbom.cdx.json" > "$(results.SBOM_PATH.path)"
```

Vulnerability scan Task

```yaml
apiVersion: tekton.dev/v1beta1
kind: Task
metadata:
  name: vulnerability-scan
spec:
  description: Scan for vulnerabilities with Grype, fail on high+
  params:
    - name: IMAGE
      type: string
    - name: FAIL_ON
      type: string
      default: "high"
  workspaces:
    - name: source
  steps:
    # The anchore/grype image also has no shell; pass arguments directly
    - name: scan
      image: docker.io/anchore/grype:v0.79.2
      args:
        - $(params.IMAGE)
        - --fail-on
        - $(params.FAIL_ON)
        - --output
        - cyclonedx-json
        - --file
        - $(workspaces.source.path)/vuln-report.json
```

Full Pipeline definition

```yaml
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: secure-build-pipeline
spec:
  params:
    - name: repo-url
      type: string
    - name: image-reference
      type: string
  workspaces:
    - name: shared-data
    - name: docker-credentials
  tasks:
    - name: fetch-source
      taskRef:
        name: git-clone
      workspaces:
        - name: output
          workspace: shared-data
      params:
        - name: url
          value: $(params.repo-url)

    - name: build-push
      runAfter: ["fetch-source"]
      taskRef:
        name: kaniko
      workspaces:
        - name: source
          workspace: shared-data
        - name: dockerconfig
          workspace: docker-credentials
      params:
        - name: IMAGE
          value: $(params.image-reference)

    - name: generate-sbom
      runAfter: ["build-push"]
      taskRef:
        name: generate-sbom
      workspaces:
        - name: source
          workspace: shared-data
      params:
        - name: IMAGE
          value: $(params.image-reference)

    - name: vulnerability-scan
      runAfter: ["generate-sbom"]
      taskRef:
        name: vulnerability-scan
      workspaces:
        - name: source
          workspace: shared-data
      params:
        - name: IMAGE
          value: $(params.image-reference)
```

Tekton Chains watches completed TaskRuns and automatically signs the provenance using the configured keys. This achieves SLSA Build Level 2 out of the box, and Level 3 with SPIFFE/SPIRE integration for non-falsifiable provenance.
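Chains stores its provenance as an in-toto statement wrapped in a DSSE envelope (a payloadType, a base64-encoded payload, and signatures). To inspect what was actually recorded, for instance a line of the JSON that cosign verify-attestation typically prints, a minimal sketch that decodes the envelope and lists the subject digests. Function names are ours, and the sketch deliberately does not verify signatures; that remains cosign's job:

```python
import base64
import json


def read_in_toto(envelope: dict) -> dict:
    """Decode a DSSE envelope's payload into its in-toto statement.

    NOTE: signatures are NOT verified here; run cosign verify-attestation first."""
    if envelope.get("payloadType") != "application/vnd.in-toto+json":
        raise ValueError(f"unexpected payloadType: {envelope.get('payloadType')}")
    return json.loads(base64.b64decode(envelope["payload"]))


def summarize(statement: dict) -> dict:
    """Pull out the predicate type and the digest-pinned subjects."""
    return {
        "predicateType": statement.get("predicateType"),
        "subjects": [
            f'{s["name"]}@sha256:{s["digest"]["sha256"]}'
            for s in statement.get("subject", [])
            if "sha256" in s.get("digest", {})
        ],
    }


if __name__ == "__main__":
    statement = {
        "_type": "https://in-toto.io/Statement/v1",
        "predicateType": "https://slsa.dev/provenance/v1",
        "subject": [{"name": "ghcr.io/my-org/myapp", "digest": {"sha256": "ab" * 32}}],
        "predicate": {},
    }
    envelope = {
        "payloadType": "application/vnd.in-toto+json",
        "payload": base64.b64encode(json.dumps(statement).encode()).decode(),
        "signatures": [],
    }
    print(summarize(read_in_toto(envelope)))
```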

Step 6: Harbor registry with policy enforcement

Configure Harbor to enforce signatures and trigger SBOM generation:

```bash
# Deploy Harbor with the Trivy scanner (Helm)
helm repo add harbor https://helm.goharbor.io
helm install harbor harbor/harbor \
  --set expose.type=ingress \
  --set expose.ingress.hosts.core=harbor.example.com \
  --set externalURL=https://harbor.example.com \
  --set trivy.enabled=true \
  --set persistence.enabled=true

# Configure project-level policies via the Harbor API.
# enable_content_trust_cosign: only Cosign-signed images can be pulled.
# (The Notary-based enable_content_trust flag is omitted; Notary was removed in Harbor 2.9.)
# Credentials come from the environment instead of the default admin password.
curl -fsS -X PUT "https://harbor.example.com/api/v2.0/projects/myproject" \
  -H "Content-Type: application/json" \
  -u "admin:${HARBOR_PASSWORD}" \
  -d '{
    "metadata": {
      "enable_content_trust_cosign": "true",
      "auto_scan": "true",
      "prevent_vul": "true",
      "severity": "high"
    }
  }'
```

With enable_content_trust_cosign set to true, Harbor will refuse to serve images that lack a valid Cosign signature. With prevent_vul and severity configured, Harbor blocks pulls of images with vulnerabilities at or above the threshold. The auto_scan setting triggers Trivy scans on every push, and Harbor stores SBOM attestations as OCI artifacts alongside images.

Testing the end-to-end pipeline

Verify every enforcement point works by testing both the happy path and failure cases:

```bash
# TEST 1: Happy path; signed, attested, clean image deploys
# Run the GitHub Actions pipeline on a clean image, then verify locally:
cosign verify \
  --certificate-identity-regexp="^https://github.com/my-org/.*" \
  --certificate-oidc-issuer=https://token.actions.githubusercontent.com \
  ghcr.io/my-org/myapp@sha256:abc123...

cosign verify-attestation --type spdxjson \
  --certificate-identity-regexp="^https://github.com/my-org/.*" \
  --certificate-oidc-issuer=https://token.actions.githubusercontent.com \
  ghcr.io/my-org/myapp@sha256:abc123...

# Deploy to Kubernetes; should succeed
kubectl run test --image=ghcr.io/my-org/myapp@sha256:abc123...

# TEST 2: Unsigned image; admission controller blocks it
# Push an unsigned image directly (bypassing the pipeline)
docker push ghcr.io/my-org/unsigned-app:latest
# Attempt deploy; Kyverno should reject with:
# "image signature verification failed"
kubectl run test-unsigned --image=ghcr.io/my-org/unsigned-app:latest
# Expected: Error from server: admission webhook denied the request

# TEST 3: SBOM policy violation; pipeline fails at the policy gate
# Add a blocked dependency (e.g., event-stream) to package.json
# Push to trigger the pipeline
# Expected: conftest step fails with:
# "BLOCKED: package 'event-stream' is on the deny list"

# TEST 4: Critical vulnerability; Grype fails the build
# Use a base image with known critical CVEs
# FROM node:14 (has multiple critical CVEs)
# Expected: scan-action step fails with exit code 1

# TEST 5: Dependency drift; new package flagged for review
# Add a new dependency not in baseline.json
# Submit as PR
# Expected: warning on the PR:
# "NEW DEPENDENCY: 'newpkg@1.0.0' not in approved baseline"

# TEST 6: Harbor blocks vulnerable image pull
docker pull harbor.example.com/myproject/vulnerable-app:latest
# Expected: Error: FORBIDDEN; image has critical vulnerabilities
```

Why would this architecture have caught the XZ Utils attack?

Each enforcement layer independently addresses a different aspect of CVE-2024-3094.

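SBOM diffing would have surfaced the xz-utils changes at two levels. File-level SBOM diffing of the source between 5.4.x and 5.6.0 would have exposed the new binary test files and the modified build scripts as unexpected changes requiring review. At the package level, the baseline comparison policy would emit a NEW DEPENDENCY warning for the xz-utils version bump itself, forcing a human decision before the new release reached production.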

Maintainer change alerting, extended beyond npm to upstream and distribution release metadata, would have flagged the transition from Lasse Collin to Jia Tan as the release author. Scorecard-style project health signals would also have highlighted a single-maintainer project with a recently added co-maintainer.

Reproducible build verification comparing SBOMs from git source versus the release tarball would have revealed the build-to-host.m4 file present only in tarballs, the exact mechanism used to inject the backdoor.

Binary SBOM analysis of sshd would have detected the unexpected transitive dependency on liblzma through libsystemd, flagging the attack surface that made SSH vulnerable to a compression library backdoor.

Cryptographic provenance via SLSA provenance and Cosign attestations creates an auditable chain linking every artifact to its source commit, builder identity, and build environment, making it far harder to inject malicious code without detection.

No single tool would have stopped this attack. But multiple independent enforcement points, such as SBOM diffing, maintainer monitoring, build reproducibility, admission control, and continuous rescanning, create a defense-in-depth posture where the attacker would need to compromise multiple systems simultaneously. That transforms a supply chain attack from a single point of failure into a distributed consensus problem that the attacker must solve, dramatically raising the cost and reducing the probability of success.
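The "distributed consensus" argument is multiplicative: if each layer independently misses an attack with some probability, the attacker has to beat all of them. A toy calculation with purely hypothetical miss rates (illustrative numbers, not measurements) shows how quickly this compounds. The independence assumption is doing real work here; layers that share an input, such as several tools reading the same SBOM, fail together and do not multiply this way.

```python
from math import prod


def evasion_probability(miss_rates: list[float]) -> float:
    """Probability an attack slips past every layer, assuming independent failures."""
    return prod(miss_rates)


if __name__ == "__main__":
    # Hypothetical per-layer miss rates: SBOM diffing, maintainer alerts,
    # source-vs-tarball comparison, binary dependency analysis, admission control
    layers = [0.5, 0.5, 0.7, 0.6, 0.8]
    print(round(evasion_probability(layers), 3))  # 0.084
```

Even with every layer individually missing more often than not, the combined evasion chance falls below one in ten.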

Conclusion

The XZ Utils backdoor was not a failure of technology; it was a failure of process. A single underfunded maintainer, no automated supply chain verification, and no policy enforcement between source code and production deployment. The technical sophistication of the attack (Ed448 cryptography, IFUNC hijacking, multi-year social engineering) was extraordinary, but the defense required is not.

The architecture described here, SBOM generation at build, OPA policy gates, Cosign signing, Kyverno admission control, and continuous drift detection, is implementable today with open-source tools. The key insight is that supply chain security is not a single gate but a continuous verification loop: generate, attest, verify, scan, re-scan, alert, remediate. Every step in the pipeline both produces and consumes cryptographic evidence, and every enforcement point operates independently.

The most important technical decision is to make the CI/CD pipeline the only path to production; no manual pushes, no exceptions, no tag-based references. Every image must be signed, every SBOM must be attested, and every deployment must be verified. The self-healing feedback loops ensure that even if a vulnerability is discovered after deployment, the system automatically detects it, alerts, and triggers remediation.

After XZ Utils, the question is no longer whether to implement supply chain security, but how fast you can deploy it.